Disclaimer

This article hasn’t been reviewed yet, and it’s still a work in progress.

If you’d like to help out with reviewing, feel free to drop me a message (see the About Page) or leave a comment.

I’ll be gradually adding more problems as time permits (I’m also working on Part 2).

Special thanks to:

meithecatte- for pointing one of the problem statements was incorrect.cryslith- for pointing out some mistakes in my comments and helping me correct a few solutions.- Gheorghe Craciun for inspiring and allowing me to borrow some of his problems.

If I forgot to include a source or mistakenly credited an exercise to the wrong author, please know it was unintentional. Some of the problems are original (created by me), but not all are signed, as the results are too elementary to require attribution. Similarly, some solutions are original, while others are based on official ones—just presented in slightly more detail.

Writing this short article made me nostalgic and brought back fond memories of my high school math teacher, Carmen Georgescu, who had a positive influence on me, as well as Gabriel Tica, who also inspired me when I was a young student.

Introduction

Inequalities are among the most “fascinating” and versatile topics in competitive mathematics because they challenge solvers to think creatively and intuitively. If you look at the IMO problem sets, you will find that inequality problems are almost always present, year after year.

Approaching an “inequality” problem requires more than just sheer “mathematical force” (although using techniques from Real Analysis can help); you need to take a step back and come up with clever manipulations, substitutions, and (sometimes) novel ideas. In essence, inequality problems blend “beauty” with “intellectual challenge”, and they are embodying so well the spirit of “competitive mathematics”.

I am a bad salesperson when it comes to selling mathematics, but the main idea is inequality problems are cool.

- For soccer lovers, solving a hard inequality problem feels like scoring a goal after a long dribbling.

- For video-games lovers, solving a hard inequality problem feels like figuring out a hard puzzle in: Machinarium or The Longest Journey. In the lack of contemporary examples.

- For chess-lovers, solving a hard inequality problem feels like solving a mate-in-six puzzle.

- For competitive programmers, solving a hard inequality feels like solving a hard problem on codeforces.

In case you haven’t seen one, this is what a hard inequality problems look like:

Let \(x_1, x_2, \dots x_n \in \mathbb{R}_{+}\), prove:

\[\sum_{i=1}^n \left[\frac{1}{1+\sum_{j=1}^i x_j}\right] \lt \sqrt{\sum_{i=1}^n \frac{1}{x_i}} \]

Source

The Romanian Math Olympiad, The National Phase.

Let \(a,b,c\) be positive real numbers. Prove that:

\[ \frac{a}{\sqrt{a^2+8bc}} + \frac{b}{\sqrt{b^2+8ac}} + \frac{c}{\sqrt{c^2+8ab}} \ge 1 \].

Source

2001 IMO Problems/Problem 2

It is generally unwise to label something as “hard” or “difficult,” especially in mathematics. However, considering that these problems are actual IMO challenges, it is reasonable to label them in this way.

The purpose of this article is to highlight some techniques and methods that can assist math hobbyists, novice problem solvers, and curious undergraduates in approaching seemingly difficult inequality problems. This writing will only touch upon a small portion of this expansive (debatable epithet) class of problems.

An edgy teacher, names excluded, once said: “In an ideal world people would solve inequality problems instead of Sudoku!”. We haven’t spoken since, I like Sudoku.

Inequations vs. Inequalities

There is a subtle distinction between an inequality and an inequation, although the terms are often used interchangeably in everyday mathematical language.

An inequation, a less common term, behaves just like a mathematical equation involving an inequality symbol. Inequations emphasize the algebraic problem-solving aspect of an inequality.

Those are inequations:

Find real numbers \(x\) for which the following "inequality" holds:

\[ \sqrt{3-x}-\sqrt{x+1} \gt \frac{1}{2} \]

Solution

Solution is left to reader. It's not terribly difficult, even it's an IMO problem. Also there is no "trickonometry" involved.

Source

IMO 1962

An inequation is all about finding solutions, while inequalities focus on the actual relationship between numbers, a statement of truth that applies for all numbers in a given domain.

The following are inequalities:

Problem IVI03 (The modulus inequality)

Let \(a, b\) real numbers. Prove that:

\[ |a+b|\le|a|+|b| \]

Solution

We identify 4 cases:

Both \(a\) and \(b\) are non-negative \((a\ge0, b\ge0)\):

\[|a+b|=a+b=|a|+|b| \]

Both \(a\) and \(b\) are non-positive \((a\le0, b\le0)\):

\[|a+b|=-(a+b)=-a+(-b)=|a|+|b|\]

-

\(a\) and \(b\) have opposite signs. Since the proof is almost identical, we assume \(a\ge0\) and \(b\le0\) and two sub-cases:

- If \(a+b\ge0\) then \(|a+b|=a+b \le a-b = |a|+|b|\).

- If \(a+b\le0\) then \(|a+b|=-a-b \le |a| + |b|\).

The equality holds when \(a\) and \(b\) have the same signs.

Now, let’s try to use the previous result (the modulus inequality) in a creative way:

Let \(a,b\) real numbers. Prove the inequality:

\[ | 1 + ab | + | a + b | \ge \sqrt{|a^2-1||b^2-1|} \]

Hint 1

Consider proving the identity:

\[ \left(a^2-1\right)\left(b^2-1\right) = (1+ab)^2 - (a+b)^2 \]

Hint 2

Consider the previous problem IVI03.

Solution

We begin by using the modulus inequality:

\[ |x| + |y| \geq |x + y|. \]

Applying this to our terms (when \(a+b\) is positive and \(a+b\) is negative), we obtain:

\[ |1+ab| + |a+b| \ge |1+ab+a+b| \] \[ |1+ab| + |a+b| \ge |1+ab-a-b| \]

Multiplying these two inequalities gives:

\[ \left(|1+ab|+|a+b|\right)^2 \geq |1+ab+a+b||1+ab-a-b| \Leftrightarrow \] \[ \left(|1+ab|+|a+b|\right)^2 \geq |(1+ab)^2-(a+b)^2| \Leftrightarrow \] \[ |1+ab|+|a+b| \geq \sqrt{(1+ab)^2-(a+b)^2} \]

Now, let's prove that:

\[ (1+ab)^2-(a+b)^2 = 1 + 2ab + (ab)^2 - a^2 - 2ab - b^2 = \] \[ = a^2(b^2-1)-(b^2-1) = (a^2-1)(b^2-1) \]

Substituting this result back into our inequality::

\[ |1+ab|+|a+b| \ge \sqrt{|a^2-1||b^2-1|} \]

Finally, we analyze the equality case. When does equality hold?

Source

Romanian Math Olympiad, 10th grade, 2008

Let \(x\in\mathbb{R}\). Prove that:

\[ x^2+x+1\gt0 \]

Hint 1

\(a^2\ge0, \forall a\in\mathbb{R}\)

Hint 2

Try multiplying both sides by 2 or completing the square.

Solution 1

We start by multiplying each side by 2:

\[ x^2+x^2+2x+1+1>0 \Leftrightarrow x^2+1+(x+1)^2 > 0 \]

This holds true because \(x^2+1\gt0\) and \((x+1)^2\ge0\).

Solution 2

We rewrite the expression as:

\[ x^2+x+1=x^2+2*x*\frac{1}{2}+(\frac{1}{2})^2+[1-(\frac{1}{2})^2]=(x+\frac{1}{2})^2+\frac{3}{4} \]

Since both terms are non-negative, we conclude that:

\[ (x+\frac{1}{2})^2+\frac{3}{4}>0 \]

Let \(a,b\) real numbers such that \(a+b \gt 0\), prove:

\[ \frac{a^2+b^2}{a+b} \geq \frac{a+b}{2} \]

Solution

We eliminate the denominators using cross-multiplication:

\[ \frac{a^2+b^2}{a+b} \geq \frac{a+b}{2} \Leftrightarrow 2\cdot(a^2+b^2) \geq (a+b)^2 \Leftrightarrow (a-b)^2 \geq 0 \]

The last inequality is always true for real numbers.

Let \(a,b\) real numbers, \(a+b \ge 0\), prove:

\[ \frac{a}{b^2}+\frac{b}{a^2} \ge \frac{1}{a}+\frac{1}{b} \]

Hint 1

\(\frac{1}{a}=\frac{a}{a^2}\)

Solution

We begin by rewriting the given inequality:

\[ \frac{a}{b^2}-\frac{b}{b^2}+\frac{b}{a^2}-\frac{a}{a^2} \ge 0 \]

Rearranging the terms:

\[ \frac{a-b}{b^2}-\frac{a-b}{a^2} \ge 0 \]

Factoring out \(a-b\):

\[ \frac{(a-b)^2*(a+b)}{(ab)^2} \ge 0 \]

Since we know that:

\[ (a-b)^2 \ge 0 , (ab)^2 \ge 0 \text{ and } a+b \ge 0 \]

It follows that:

\[ \Rightarrow \frac{(a-b)^2*(a+b)}{(ab)^2} \ge 0 \]

The equality holds if \(a=b\).

Keep in mind the following two inequalities, as they will be helpful when solving more complex problems:

Let \(x,y,z \in \mathbb{R}\). Prove that:

\[

x^2+y^2+z^2 \ge xy + yz + zx

\]

Try multiplying both sides by 2. Multiplying both sides by 2 and rearranging the terms: \((x-y)^2+(y-z)^2+(z-x)^2 \ge 0\) Since squares of real numbers are always non-negative, the inequality holds. Equality occurs when \(x=y=z\).Hint 1

Solution

Let \(a, b\) be positive real numbers. Prove that:

\[ a^3+b^3 \ge a^2b+ab^2 \]

Solution

Rearranging the given inequality:

\[ a^3+b^3-a^2b-ab^2 \ge 0 \]

Factoring step by step:

\[ a^2(a-b)+b^2(b-a) \ge 0 \Leftrightarrow \\ (a-b)(a^2-b^2) \ge 0 \Leftrightarrow \\ (a-b)(a-b)(a+b) \ge 0 \Leftrightarrow \\ (a-b)^2(a+b) \ge 0 \]

Since \((a-b)^2 \ge 0\) and \(a+b\ge0\), the inequality holds.

Equality occurs when \(a=b\).

For the next problem, consider applying an inequality we have already established.

Let \(a,b,c\) positive real numbers, such that \(abc=1\). Prove the following inequality:

\[ a^4+b^4+c^4 \geq a+b+c \]

Hint 1

\(a^4=\left(a^2\right)^2\)

Solution

We have already established that \(a^2+b^2+c^2\geq ab+bc+ca\). In this regard, let's make use of this fact:

\[ a^4+b^4+c^4 = \left(a^2\right)^2 + \left(b^2\right)^2 + \left(c^2\right)^2 \geq \left(ab\right)^2 + \left(bc\right)^2 + \left(ca\right)^2 \geq \] \[ \geq ab^2c + a^2bc + abc^2 = abc(a+b+c) = abc \]

Equality holds when \(a=b=c\) and \(abc=1\), so \(a=b=c=1\).

Source

Romanian Math Olympiad, Etapa Locala, 9th grade, Galati, 2004

In a similar fashion:

Let \(a,b,c\) positive real numbers such that \(abc=1\). Prove the inequality:

\[ \frac{a^3}{bc}+\frac{b^3}{ca}+\frac{c^3}{ab} \geq a+b+c \]

Solution

We start by rewriting the left-hand side over a common denominator:

\[ \frac{a^3}{bc}+\frac{b^3}{ca}+\frac{c^3}{ab} = \frac{a^4+b^4+c^4}{abc} = \] \[ = \frac{(a^2)^2+(b^2)^2+(c^2)^2}{abc} \tag{1} \]

In expression \((1)\), we apply the inequality \(x^2 + y^2 + z^2 \geq xy + yz + zx\) twice (see Problem IVI08), and use the assumption \(abc = 1\):

\[ \frac{(a^2)^2+(b^2)^2+(c^2)^2}{abc} \geq \frac{a^2b^2+b^2c^2+c^2a^2}{abc} \geq \] \[ \geq \frac{abbc+bcca+caab}{abc} = a+b+c \]

Thus, the inequality is proven. Equality holds when \(a = b = c\).

Do you know how to factor your symmetric polynomials ?

For \((x,y)\neq(0,0)\), prove:

\[ x^4+x^3y+x^2y^2+xy^3+y^4\gt0 \]

Hint 1

Did you know about the following identity?

\[ x^n-y^n=(x-y)(x^{n-1}+x^{n-2}y+\dots+xy^{n-2}+y^{n-1}) \]

Solution

Using the identity \(x^n-y^n=(x-y)(x^{n-1}+x^{n-2}y+\dots+xy^{n-2}+y^{n-1}) \), and assuming \(x\neq y\), we can rewrite the given expression as:

\[ x^4+x^3y+x^2y^2+xy^3+y^4 = \frac{x^5-y^5}{x-y} \]

Since \(5\) is an odd number, both \(x^5-y^5\) and \(x-y\) will always have the same sign. This ensures the left-hand side is always positive.

If \(x=y\), the expression simplifies to:

\[x^4+x^3y+x^2y^2+xy^3+y^4=4x^4\]

Thus, the inequality is always true.

I wouldn’t call the next problem a “fundamental” result, but it’s definitely a useful trick that I’ve seen applied to solve at least two or three problems in various math competitions:

Let \(x\) a positive real number such that \(x\gt1\), prove that:

\[ \sqrt{x} \gt \frac{1}{\sqrt{x+1}-\sqrt{x-1}} \]

Solution

To simplify the denominator, multiply by the conjugate expression:

\[ \sqrt{x} \gt \frac{\sqrt{x+1}+\sqrt{x-1}}{(\sqrt{x+1}+\sqrt{x-1})(\sqrt{x+1}-\sqrt{x-1})} \Leftrightarrow \\ \sqrt{x} \gt \frac{\sqrt{x+1}+\sqrt{x-1}}{2} \]

Squaring both sides gives:

\[ x \gt \frac{(\sqrt{x+1}+\sqrt{x-1})^2}{4} = \frac{2x + 2\sqrt{x^2-1}}{4} \]

After simplifying further::

\[ x > \sqrt{x^2-1} \Leftrightarrow x^2 > x^2 - 1. \]

Since \( x > 1 \), the inequality holds true for all \( x > 1 \), completing the proof.

The following two problems have similar solutions. The key idea is to bound each term between two fixed values.

Let \(n \in \mathbb{N}\) and \(n \gt 1\). Prove that:

\[\frac{1}{2}\lt\frac{1}{n+1}+\frac{1}{n+2}+\dots+\frac{1}{2n}\lt\frac{3}{4}\]

Hint 1

\(\frac{1}{n+j}\gt\frac{1}{2n}, \forall j \lt n\)

Solution

To find a lower bound, since each term satisfies \(\frac{1}{n+j} \ge \frac{1}{2n}\), we sum these inequalities:

\[ \frac{1}{n+1}+\frac{1}{n+2}+\dots+\frac{1}{2n}\gt\frac{1}{2n}+\frac{1}{2n}+\dots+\frac{1}{2n}=\frac{1}{2} \]

To find the upper bound, we rewrite the sum in symmetric way:

\[ \frac{1}{n+1}+\frac{1}{n+2}+\dots+\frac{1}{2n}=\frac{1}{2}[(\frac{1}{n}+\frac{1}{2n})+(\frac{1}{n+1}+\frac{1}{2n-1})+\dots] \]

Approximating each term:

\[ \frac{1}{2}[\frac{3n}{2n^2}+\frac{3n}{2n^2+(n-1)}+\dots] < \frac{1}{2}[\frac{3n}{2n^2}+\dots] \]

Summing these fractions:

\[ \frac{3}{4} + \frac{1}{n} < \frac{3}{4} \]

Thus, we have:

\[ \frac{1}{2} \lt \sum_{j=1}^n \frac{1}{n+j} \lt \frac{3}{4} \]

which completes the proof.

Source

This problem is taken from "Culegere de probleme pentru liceu" by Năstăsescu, Niță, Brandiburu, and Joița (1997), a popular mathematics book during my high school years.

Let \(n\in\mathbb{N}^{*}\setminus\{1\}\). Prove that:

\[ \frac{1}{2} \lt \frac{1}{n^2+1} + \frac{2}{n^2+2} + \frac{3}{n^2+3} + \dots + \frac{n}{n^2+n} \lt \frac{1}{2} + \frac{1}{2n} \]

Hint 1

Note that:

\[\frac{1}{n^2+1} \lt \frac{1}{n^2}\]

What can we infer about \(\frac{2}{n^2+2}\)?

Hint 2

On the other hand: \(\frac{1}{n^2+1} \gt \frac{1}{n^2+n}\). What can we deduce about \(\frac{2}{n^2+2}\)?

Solution

First, notice that for each term in the sum, we can replace the denominator with the smaller value to obtain a valid upper bound. Hence, we have:

\[ \frac{1}{n^2+1} + \frac{2}{n^2+2} + \frac{3}{n^2+3} + \dots + \frac{n}{n^2+n} \lt \frac{1}{n^2}+\dots+\frac{n}{n^2} \]

This simplifies to:

\[ \frac{1}{n^2+1} + \frac{2}{n^2+2} + \frac{3}{n^2+3} + \dots + \frac{n}{n^2+n} \lt \frac{1}{n^2}(1+2+\dots+n) = \frac{1}{2}+\frac{1}{2n} \]

Thus, we have the upper bound: \(\frac{1}{2} + \frac{1}{n}\).

Now, we replace each term by its corresponding larger denominator to obtain a lower bound. Specifically, we have:

\[ \frac{1}{n^2+1} + \frac{2}{n^2+2} + \frac{3}{n^2+3} + \dots + \frac{n}{n^2+n} \gt \\ \gt \frac{1}{n^2+n} + \frac{2}{n^2+n} + \dots + \frac{n}{n^2+n} \]

Factoring out \(\frac{1}{n^2+n}\), this simplifies to:

\[ \frac{1}{n^2+1} + \frac{2}{n^2+2} + \frac{3}{n^2+3} + \dots + \frac{n}{n^2+n} \gt \\ \gt \frac{1}{n^2+n}(1+2+\dots+n) = \frac{1}{2} \]

Thus we have a lower bound: \(\frac{1}{2}\).

Combining those results:

\[ \frac{1}{2} \lt \sum_{j=1}^{n} \frac{j}{n^2+j} \lt \frac{1}{2} + \frac{1}{2n} \]

Source

RMO-2002, India

There is something elegant about the next problem:

Prove that for any \(x \in \mathbb{R}_{+}\) the following inequality is true:

\[ \sqrt{x^2+x+\sqrt{x^2+x+\sqrt{x^2+x+\sqrt{x^2+x+\dots+\sqrt{x^2+x}}}}} < x+1 \]

\(n\) is the number of subsequent "radicals".

Hint 1

Try defining the expression recursively after squaring both sides.

Solution

We observe that squaring both sides of the inequality preserves its structure, regardless of the number of nested radicals \(n\):

\[ x^2+x+\sqrt{x^2+x+\sqrt{x^2+x+\sqrt{x^2+x+\dots+\sqrt{x^2+x}}}} < (x+1)^2 \Leftrightarrow \] \[ \sqrt{x^2+x+\sqrt{x^2+x+\sqrt{x^2+x+\dots+\sqrt{x^2+x}}}} < x+1 \]

So, in essence, we need to verify that the base case of the nested radical satisfies the inequality:

\[ \sqrt{x^2+x} < x^2 + 1 \Leftrightarrow x+1 > 0 \]

Since \(x > 0\), the last inequality clearly holds. Therefore, the original inequality is valid.

Source

D.M. Bătineţu-Giurgiu

The following are the first non-trivial challenges in this article that can be solved without using advanced techniques or inequalities. Try using the provided hints before checking the full solution.

Let \(a_1, a_2, \dots, a_n\) positive real numbers such that \(\sum_{i=1}^{2009}a_i = 2009\). Prove the inequality:

\[ \frac{a_1^2+a_2^2}{a_1+a_2}+\frac{a_2^2+a_3^2}{a_2+a_3}+\dots+\frac{a_{2009}^2+a_1^2}{a_{2009}+a_1} \geq 2009 \]

Hint 1

We have already proven the inequality: \[\frac{a^2+b^2}{a+b}\geq\frac{a+b}{2}\]

Solution

From a previous exercise, we know that: \[\frac{a^2+b^2}{a+b}\geq\frac{a+b}{2}\]

Even if we do not remember the exact result, it is natural to try to bound each term individually.

We apply the inequality to each term in the given sum:

\[ \frac{a_1^2+a_2^2}{a_1+a_2}+\frac{a_2^2+a_3^2}{a_2+a_3}+\dots+\frac{a_{2009}^2+a_1^2}{a_{2009}+a_1} \geq \]

Note that each term \( a_i \) appears exactly twice on the right-hand side, and each time with a factor of \( \frac{1}{2} \). Thus, the total becomes:

\[ \frac{a_1+a_2}{2}+\dots+\frac{a_{2009}+a_1}{2} = \sum_{i=1}^{2009}a_i = 2009 \]

Therefore, the inequality is proven.

Source

Romanian Math Olympiad, Etapa Locala, 9th grade, Galati, 2009

Let \(a,b,c\) positive real numbers such that: \(abc=1\). Prove that:

\[ \frac{1}{a^3+b^3+1}+\frac{1}{b^3+c^3+1}+\frac{1}{c^3+a^3+1} \leq 1 \]

Hint 1

Try using the inequality \( a^3 + b^3 \geq ab(a + b) \), and use the fact that \( abc = 1 \) to simplify.

Solution

We begin by applying the inequality \( a^3 + b^3 \geq ab(a + b) \). This gives:

\[ \frac{1}{a^3+b^3+1}+\frac{1}{b^3+c^3+1}+\frac{1}{c^3+a^3+1} \leq \] \[ \frac{1}{ab(a+b)+abc}+\frac{1}{bc(b+c)+abc}+\frac{1}{ca(c+a)+abc} = \] \[ \frac{1}{ab(a+b+c)}+\frac{1}{bc(a+b+c)}+\frac{1}{ca(a+b+c)} \]

Now, since \( abc = 1 \), we can substitute \( a = \frac{1}{bc} \), \( b = \frac{1}{ca} \), and \( c = \frac{1}{ab} \), which implies:

\[ \frac{1}{a^3+b^3+1}+\frac{1}{b^3+c^3+1}+\frac{1}{c^3+a^3+1} \leq \] \[ \frac{c}{a+b+c}+\frac{a}{a+b+c}+\frac{b}{a+b+c} = 1 \]

Therefore, the original inequality holds.

Source

Romanian Math Olympiad, Etapa Locala, 8th grade, Galati, 2017

Let \(a,b,c\) positive real numbers such that \(abc=1\). Prove that:

\[ 2\left(\frac{a}{b}+\frac{b}{c}+\frac{a}{a}\right) \geq \frac{1}{a} + \frac{1}{b} + \frac{1}{c} + a + b + c \]

Note

This problem can be approached using more advanced techniques, which will be introduced later in this text. However, it can also be solved using elementary algebraic methods and known inequalities.

Hint 1

Since \( abc = 1 \), try expressing one variable in terms of the others, for example \( c = \frac{1}{ab} \), and reduce the inequality to a two-variable expression.

Hint 2

Recall the inequality:

\[ a^3+b^3 \geq ab(a+b) = ab^2+a^2b \]

Solution

Using the condition \( abc = 1 \), substitute \( c = \frac{1}{ab} \) into the original inequality. The left-hand side becomes:

\[ 2\left(\frac{a}{b} + ab^2 + \frac{1}{a^2b}\right) \geq \frac{1}{a} + \frac{1}{b} + a + b + \frac{1}{ab} \]

We now simplify both sides to a common form. Multiply through as needed to bring all terms to a common denominator. After simplification and rearrangement, the inequality becomes:

\[ 2(a^3+a^3b^3+1)\geq ab+a^2+a^3b^2+a^3b+a^2b^2+a \Leftrightarrow \] \[ (a^3-a^2-a+1)+(a^3b^3-ab-a^2b^2+1)+a^3(b^3-b^2-b+1) \geq 0 \Leftrightarrow \] \[ \underbrace{\left[(a^3+b^3)-a(a+1)\right]}_{\geq 0} + \underbrace{\left[(a^3b^3+1)-ab(ab+1)\right]}_{\geq 0} + \] \[ + \underbrace{a^3\left[(b^3+1)-b(b+1)\right]}_{\geq 0} \geq 0 \]

Now we estimate each group using known inequalities. Recall that:\[a^3 + b^3 \geq ab(a + b)\]

And notice that each grouped expression is of a similar structure, with the general form \( x^3 + y^3 \geq xy(x + y) \). Therefore, each term is non-negative.

Source

Romanian Math Olympiad, Etapa Locala, 8th grade, Galati, 2009

Prove that for each positive integer \(n \gt 1\):

\[ \sqrt{n+1}+\sqrt{n}-\sqrt{2} \gt 1 + \frac{1}{\sqrt{2}} + \frac{1}{\sqrt{3}} + \dots + \frac{1}{\sqrt{n}} \]

Hint 1

In a previous problem, we proved the following inequality for \( x > 1 \):

\[ \sqrt{x} \gt \frac{1}{\sqrt{x+1}-\sqrt{x-1}} \]

Can you find a way to apply this result to solve the problem?

The idea of using a smaller, known result to prove a more general problem (inequality) is a common and powerful strategy in mathematical problem-solving.

Solution

In a previous exercise, we have already established that:

\[ \sqrt{x} \gt \frac{1}{\sqrt{x+1}-\sqrt{x-1}} \]

Rearranging, this gives:

\[ \sqrt{x+1}-\sqrt{x-1} \gt \frac{1}{\sqrt{x}} \]

Applying this for \( x = 2,3, \dots, n \), we obtain:

\[ \begin{cases} \frac{1}{\sqrt{2}} \lt \sqrt{3}-\sqrt{1} \\ \frac{1}{\sqrt{3}} \lt \sqrt{4}-\sqrt{2} \\ \frac{1}{\sqrt{4}} \lt \sqrt{5}-\sqrt{3} \\ \dots \\ \frac{1}{\sqrt{n}} \lt \sqrt{n+1}-\sqrt{n-1} \end{cases} \]

Summing all these inequalities from \( x = 2 \) to \( x = n \), we get:

\[ (\sqrt{3} - \sqrt{1}) + (\sqrt{4} - \sqrt{2}) + (\sqrt{5} - \sqrt{3}) + \dots + (\sqrt{n+1} - \sqrt{n-1}) > \sum_{i=2}^{n} \frac{1}{\sqrt{i}}. \]

Observing the left-hand side, all intermediate terms cancel, leaving us with:

\[ \sqrt{n+1} + \sqrt{n} - \sqrt{2} -1 > \sum_{i=2}^{n} \frac{1}{\sqrt{i}} \Leftrightarrow \] \[ \sqrt{n+1} + \sqrt{n} - \sqrt{2} > 1 + \sum_{i=2}^{n} \frac{1}{\sqrt{i}}. \]

Thus, the inequality is proven.

Source

Australian Math Olympiad, 1987 (?)

Let \(x,y,z\) positive real numbers. Prove the following inequality:

\[ \frac{1}{x\sqrt{x}+y\sqrt{y}+\sqrt{xyz}} + \frac{1}{y\sqrt{y}+z\sqrt{z}+\sqrt{xyz}}+\frac{1}{z\sqrt{z}+x\sqrt{x}+\sqrt{xyz}} \leq \frac{1}{\sqrt{xyz}} \]

Hint 1

Expressions with radicals often become simpler if you eliminate the roots through substitutions.

Hint 2

Look for a known or previously proven inequality that could simplify the denominators.

Hint 3

You may find the following inequality useful:

\[ a^3+b^3 \geq ab(a+b) \]

Solution

To "remove" the radicals, make the substitutions:

\[ \sqrt{x} \rightarrow a, \quad \sqrt{y} \rightarrow b, \quad \sqrt{z} \rightarrow c \]

The given inequality becomes:

\[ \begin{align} \frac{1}{a^3+b^3+abc}+\frac{1}{b^3+c^3+abc}+\frac{1}{c^3+a^3+abc} \leq \frac{1}{abc} \tag{1} \end{align} \]

From a prior problem (or a standard inequality):

\[ \begin{align} a^3+b^3 \geq ab(a+b) \Leftrightarrow (a-b)^2(a+b) \geq 0 \tag{2} \end{align} \]

Applying \((2)\) for each denominator in \((1)\):

\[ \frac{1}{a^3+b^3+abc}+\frac{1}{b^3+c^3+abc}+\frac{1}{c^3+a^3+abc} \leq \] \[ \leq \frac{1}{ab(a+b)+abc}+\frac{1}{bc(b+c)+abc}+\frac{1}{ca(c+a)+abc} = \] \[ = \frac{1}{ab(a+b+c)}+\frac{1}{bc(a+b+c)}+\frac{1}{ca(a+b+c)} = \] \[ = \frac{c}{abc(a+b+c)}+\frac{a}{abc(a+b+c)} + \frac{b}{abc(a+b+c)} = \] \[ = \frac{(a+b+c)}{(a+b+c)abc} = \frac{1}{abc} \]

Thus we obtain the desired inequality.

Equality holds when \(a=b=c\), or when \(x=y=z\).

Source

Romanian Math Olympiad, Etapa Locala, 9th grade, Timis, 2013

Let \(a,b,c\) positive real numbers such that \(ab+bc+ca=1\). Prove the inequality:

\[ \frac{1}{a+b}+\frac{1}{b+c}+\frac{1}{c+a} \ge \sqrt{3} + \frac{ab}{a+b} + \frac{bc}{b+c} + \frac{ca}{c+a} \]

Hint 1

Rearrange the inequality so that all terms involving \(a, b,\) and \(c\) are on the left-hand side.

Hint 2

Use the given condition \(ab+bc+ca=1\) to rewrite the expressions \(1 - ab\), \(1 - bc\), and \(1 - ca\) in terms of \(a, b,\) and \(c\).

Solution

Rewriting the given inequality by moving all terms involving \(a, b,\) and \(c\) to the left-hand side, we obtain:

\[ \frac{1 - ab}{a+b}+\frac{1 - bc}{b+c}+\frac{1 - ca}{c+a} \geq \sqrt{3}. \]

Since \(ab+bc+ca=1\), we use the following identities:

\[ 1 - ab = bc + ca, \quad 1 - bc = ab + ca, \quad 1 - ca = ab + bc. \]

Substituting these into the inequality, we get:

\[ \frac{bc+ca}{a+b} + \frac{ab+ca}{b+c} + \frac{ab+bc}{c+a} \geq \sqrt{3}. \]

Rewriting each fraction:

\[ \frac{a(b+c)}{b+c} + \frac{b(c+a)}{c+a} + \frac{c(a+b)}{a+b} \geq \sqrt{3}. \]

Since each term simplifies we conclude:

\[ a + b + c \overbrace{\geq}^{?} \sqrt{3}. \]

Squaring both sides, we obtain:

\[ (a + b + c)^2 \geq 3. \]

Expanding the left-hand side:

\[ a^2 + b^2 + c^2 + 2(ab + bc + ca) \geq 3. \]

Since \(ab + bc + ca = 1\), substituting this yields:

\[ a^2 + b^2 + c^2 + 2 \geq 3. \]

Finally, using the well-known inequality \(a^2 + b^2 + c^2 \geq ab + bc + ca = 1\), we add \(2\) to both sides:

\[ a^2 + b^2 + c^2 + 2 \geq 3. \]

This confirms the inequality.

Source

Romanian Math Olympiad, 9th grade, 2002

Let \(a,b,c\) positive real numbers such that \(a+b+c\le4\) and \(ab+bc+ca\ge4\). Prove that at least two of the following inequalities must hold at all times:

\[ \begin{cases} |a-b| \le 2\\ |b-c| \le 2\\ |c-a| \le 2 \end{cases} \]

Hint 1

Try expanding \((a+b+c)^2\)

Hint 2

Starting with \( (a+b+c)^2 \le 16 \), can you derive the alternate form: \( (a-b)^2 + (b-c)^2 + (c-a)^2 \le 8 \)

Solution

Since \(a+b+c\le4\) we have \((a+b+c)^2 \le 16\).

Expanding \((a+b+c)^2\) gives:

\[ (a+b+c)^2 \le 16 \Leftrightarrow \\ a^2+b^2+c^2 + 2(ab+bc+ca) \le 16 \Leftrightarrow \\ a^2+b^2+c^2 \le 8 \Leftrightarrow \\ a^2+b^2+c^2 - ab - bc - ca \le 4 \Leftrightarrow \\ a^2 - 2ab + b^2 + b^2 - 2bc + c^2 + c^2 - 2ca + a^2 \le 8 \Leftrightarrow \\ (a-b)^2 + (b-c)^2 + (c-a)^2 \le 8 \]

Now, suppose \(|a-b| \le 2\) and \(|b-c| \le 2\) are false. This would mean that \( |a - b| > 2 \) and \( |b - c| > 2 \), which leads to a contradiction.

Source

This problem is adapted from the book: T. Andreescu, Z. Feng - 101 Problems in Algebra (Korean Mathematics Competition, 2001).

Weak Inequalities vs. Strict inequalities

Weak inequalities are inequalities that allow for the possibility of equality. . They are typically denoted by the symbols \(\ge\) or \(\le\). In contrast, strict inequalities, use \(\gt\) and \(\lt\) and they don’t permit equality.

A renaissance way to grasp the concept of a weak inequality is to think of the “finger of God” touching Adam’s hand. In this metaphor, a strict inequality is represented by the following painting, as it depicts a situation that never occurs — at least not in olam ha-ze (this world).

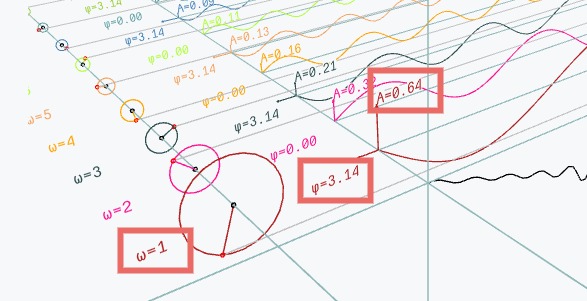

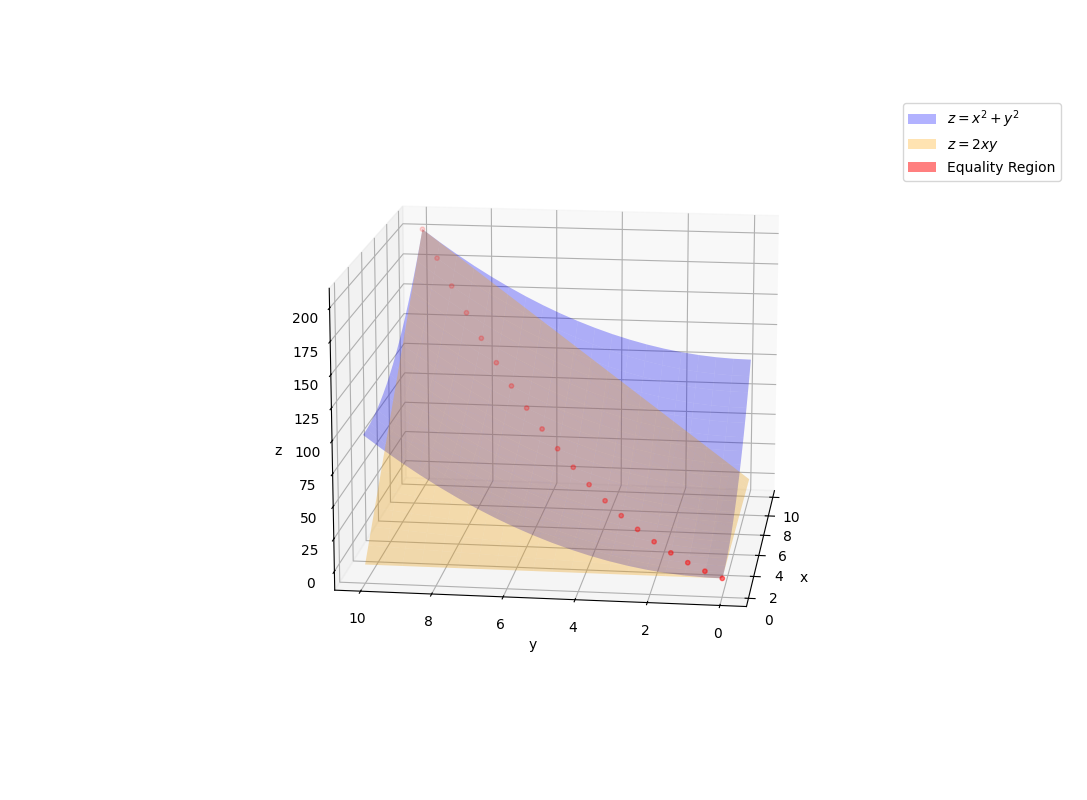

From a mathematical standpoint, we know, for example, that \(x^2+y^2\ge2xy\). This inequality is always true because \((x-y)^2\ge0\). If we plot \(x^2+y^2\), and \(2xy\), we will a see thin line where the graphical representation “touch”. This red line is key to solving many problems in physics and engineering. It is specific to weak inequalities.

All in all, the main idea is that weak inequalities are more interesting than strict inequalities.

Being playful with algebraic identities

Before delving into specific inequalities, it’s important to highlight a few key identities that problem creators frequently use when designing challenges. These identities are not only essential for understanding inequalities but also serve as powerful tools for solving a variety of other problems.

Some of my favorite identities are:

- \(\hspace{1cm} 2(x^2+y^2)=(x+y)^2+(x-y)^2 \)

- \(\hspace{1cm} x^3+y^3=(x+y)(x^2-xy+y^2) \)

- \(\hspace{1cm} x^3-y^3=(x-y)(x^2+xy+y^2) \)

- \(\hspace{1cm} x^n-y^n=(x-y)(x^{n-1}+x^{n-2}y+\dots+xy^{n-1}+y^n) \)

- \(\hspace{1cm} 2(xy+yz+zx)=(x+y+z)^2-(x^2+y^2+z^2) \)

- \(\hspace{1cm} 3(x+y)(y+z)(z+x)=(x+y+z)^3-(x^3+y^3+z^3) \)

- \(\hspace{1cm} (x+y)(y+z)(z+x)=(x+y+z)(xy+yz+zx)-xyz \)

- \(\hspace{1cm} x^3+y^3+z^3-3xyz=(x+y+z)(x^2+y^2+z^2-xy-yz-zx) \)

- \(\hspace{1cm} (\sqrt{\frac{a}{b}}+\sqrt{\frac{b}{a}})^2 = (a+b)(\frac{1}{a}+\frac{1}{b})\)

- \(\hspace{1cm} \frac{x}{(x-y)(x-z)}+\frac{y}{(y-x)(y-z)}+\frac{z}{(z-x)(z-y)}=0 \)

- \(\hspace{1cm} \frac{x^2}{(x-y)(x-z)}+\frac{y^2}{(y-x)(y-z)}+\frac{z^2}{(z-x)(z-y)}=1 \)

- \(\hspace{1cm} \frac{x^3}{(x-y)(x-z)}+\frac{y^3}{(y-x)(y-z)}+\frac{z^3}{(z-x)(z-y)}=x+y+z \)

Should you memorize all of these identities? It depends. If you’re actively participating in contests, I believe it’s worth memorizing them. Otherwise, simply being aware of their existence is sufficient. When you come across similar structures, check if these identities can help you. In a contest, you can present them as lemmas, and for clarity, it’s advisable to offer brief proofs. Fortunately, the proofs are typically straightforward, relying on simple algebraic manipulations.

For example, consider the following problems:

Let \(x,y,z \in \mathbb{R}^{*}\), where \(x< y < z\), and \(\frac{x^2}{yz}+\frac{y^2}{xz}+\frac{z^2}{xy}=3\). Prove that the arithmetic mean of \(x,y,z\) is 0.

Hint 1

Multiply both sides with \(xyz\).

Hint 2

Use the identity:

\(x^3+y^3+z^3-3xyz=(x+y+z)(x^2+y^2+z^2-xy-yz-zx)\)

Solution

The arithmetic mean of \(x,y,z\) is \(\frac{x+y+z}{3}=0\). Thus, we will need to prove that \(x + y + z = 0\).

Multiplying both sides by \(xyz\), the given expression becomes:

\[x^3+y^3+z^3-3xyz=0\]

Using the identity from Hint 2, we can conclude that:

\[0=(x+y+z)(x^2+y^2+z^2-xy-yz-zx)\]

This implies one of the following two cases: \[ \begin{cases} x+y+z=0 \text{ or} \\ x^2+y^2+z^2-xy-yz-zx=0 \\ \end{cases} \]

Now, let's consider the second case:

\[ x^2+y^2+z^2-xy-yz-zx=0 \Leftrightarrow \\ 2x^2+2y^2+2z^2-2xy-2yz-2zx=0 \Leftrightarrow \\ x^2-2xy+y^2+y^2-2yz+z^2+z^2-2zx+x^2=0 \Leftrightarrow \\ (x-y)^2+(y-z)^2+(z-x)^2=0 \]

This implies that \((x-y)=0\), \((y-z)=0\) and \((z-x)=0\) leading to \(x = y = z\).

However, this contradicts the assumption that \(x < y < z\). Therefore, the only valid conclusion is that \(x + y + z = 0\).

Source

Andrei N. Ciobanu

Find all pairs of distinct non-negative natural numbers \((x,y)\) such that:

\[x^3+y^3=(x+y)^2\]

Hint 1

Look for a suitable identity that can help simplify the expression.

Hint 2

Use the identity:

\[x^3+y^3=(x+y)(x^2-xy+y^2)\]

Solution

Using the identity \(x^3 + y^3 = (x + y)(x^2 - xy + y^2)\), we can rewrite the equation as:

\[x+y=x^2-xy+y^2\]

Next, we rearrange the terms to form a quadratic equation in \(x\):

\[ x^2-(y+1)x+(y^2-y)=0 \]

Now, we compute the discriminant, \(\Delta\), under the condition that \(\Delta \geq 0\):

\[ \Delta = -3y^2+6y+1 \geq 0 \]

This inequality simplifies to:

\[ \frac{3-2\sqrt{3}}{3} \le y \le \frac{3+2\sqrt{3}}{3} \]

The possible values of \(y\) that satisfy this condition are \(y = 1, 2\). Substituting these values back into the equation gives the pairs of solutions:

\[ (x, y) = (1, 0), (0, 1), (1, 2), (2, 1), (2, 2). \]

Source

This problem was sourced and adapted from the book: T. Andreescu, Z. Feng - 101 Problems in Algebra

This wasn’t an inequality problem, but similar structures can arise in various contexts. Knowing your identities can significantly reduce the effort required to solve a problem.

If you enjoyed the previous problem, give the next one a try:

Let \(x,y,z \in \mathbb{R}\), and assume that \((x+y+z)^3=x^3+y^3+z^3\). Prove that for all \(n \in \mathbb{N}\), the following holds:

\[(x+y+z)^{2n+1}=x^{2n+1}+y^{2n+1}+z^{2n+1}\]

Hint 1

Can you identify and use an identity that connects \((x + y + z)^3\) with \(x^3 + y^3 + z^3\)?

Hint 2

Use the fact that \( (x + y + z)^3 - (x^3 + y^3 + z^3) = 3(x + y)(y + z)(z + x) \).

Solution

No solution has been provided here. If you'd like, you can email me for a solution.

Source

This problem was sourced from "Culegere de probleme pentru liceu," by Nastasescu, Nita, Brandiburu, and Joita, 1997, a popular math book during my high school years.

The AM-GM Inequality

The AM (Arithmetic Mean) - GM (Geometric Mean) is a fundamental result in algebra that states:

For any set of non-negative real numbers \(a_1, a_2, \dots , a_n\) the arithmetic mean is always greater than or equal to the geometric mean:

\[ \frac{a_1+a_2+\dots+a_n}{n} \ge \sqrt[n]{a_1*a_2*\dots*a_n} \]

Or:

\[ \sum_{i=1}^n a_i \ge n \sqrt[n]{\prod_{i=1}^n a_i} \]

The equality holds, if, and only if \(a_1=a_2=\dots=a_n\).

For \(n=2\) the inequality can be written as: \(\frac{a+b}{2} \ge \sqrt{ab}\).

For \(n=3\) the inequality can be written as: \(\frac{a+b+c}{3} \ge \sqrt[3]{abc}\).

An interesting case arises when \(\prod_{i=1}^na_i=1\). In this situation, the inequality gives us: \(\sum_{i=1}a_i \ge n\), which means the sum of the numbers is always greater than or equal to \(n\) (the number of numbers).

With that in mind, let’s move on to the following problems:

Let \(x \in \mathbb{R}_{+}\) prove that:

\[x+\frac{1}{x} \ge 2\]

Solution

To prove the inequality, we apply the Arithmetic Mean - Geometric Mean (AM-GM) inequality to the numbers \(x\) and \(\frac{1}{x}\)::

\[ \frac{x+\frac{1}{x}}{2} \ge \sqrt{x*\frac{1}{x}} \Leftrightarrow x + \frac{1}{x} \ge 2 \]

Equality holds when \(x = \frac{1}{x}\), which implies \(x = 1\).

Now, let’s extend this concept by solving the following problem:

Let \(x_1,x_2,\dots,x_n \in \mathbb{R}_{+}\). Prove that:

\[S=\frac{x_1}{x_2}+\frac{x_2}{x_3}+\dots+\frac{x_{n-1}}{x_n}+\frac{x_n}{x_1} \ge n \]

Hint 1

What happens if you multiply each term in the sum?

Solution

To prove the inequality, we apply the AM-GM Inequality:

\[ \frac{S}{n} \ge \sqrt[n]{\frac{x_1}{x_2}\frac{x_2}{x_3}\dots\frac{x_n}{x_1}} \Leftrightarrow S \ge n*\sqrt[n]{1} \Leftrightarrow S \ge n \]

Equality holds if \(x_1 = x_2 = \dots = x_n\).

With a bit of creativity, you can solve the next problem in a manner similar to the previous one.

Let \(n\) be a positive integer greater than \(1\). Show that:

\[ \frac{1}{n}+\frac{1}{n+1}+\frac{1}{n+2}+\dots+\frac{1}{2n-1} \gt n \left(\sqrt[n]{2} - 1\right) \]

Hint 1

The inequality can be rewritten in an equivalent form:

\[ \frac{1}{n}\left(n+\frac{1}{n}+\frac{1}{n+1}+\dots+\frac{1}{2n-1}\right) \gt \sqrt[n]{2} \]

Hint 2

Consider splitting \(n\) as a sum of ones:

\[ \frac{1}{n}\left(\underbrace{1+1+\dots+1}_{n}+\frac{1}{n}+\frac{1}{n+1}+\dots+\frac{1}{2n-1}\right) \gt \sqrt[n]{2} \]

Solution

We start by rewriting the given inequality::

\[ \frac{1}{n}+\frac{1}{n+1}+\dots+\frac{1}{2n-1} \gt n \left(\sqrt[n]{2} - 1\right) \Leftrightarrow \\ \]

Which is equivalent to:

\[ \frac{1}{n}\left(n+\frac{1}{n}+\frac{1}{n+1}+\dots+\frac{1}{2n-1}\right) \gt \sqrt[n]{2} \]

Now, split \(n\) into a sum of ones and distribute them across the terms inside the parentheses:

\[ \frac{1}{n}*\left[\left(1+\frac{1}{n}\right)+\left(1+\frac{1}{n+1}\right) + \dots + \left(1+\frac{1}{2n-1}\right)\right] \gt \sqrt[n]{2} \Leftrightarrow \\ \frac{1}{n}*\left( \frac{n+1}{n} + \frac{n+2}{n+1} + \dots + \frac{2n}{2n-1} \right) \overbrace{\gt}^{AM-GM} \sqrt[n]{\frac{n+1}{n}*\dots*\frac{2n}{2n-1}} \gt \sqrt[n]{2} \]

This proves our inequality. Equality would hold for \(n=1\), which is not possible given the constraints.

Source

Australian Math Olympiad, 1992

Do you know your Partial fraction decomposition ?

Prove that \(\forall n \in [5,\infty) \cap \mathbb{N}\):

\[ \frac{1}{1*3}+\frac{1}{3*5}+\dots+\frac{1}{(n-2)n}\gt\frac{1}{\sqrt{n}}-\frac{1}{n} \]

Hint 1

Did you know that you can express the following sum as:

\[ \frac{1}{1*2}+\frac{1}{2*3}+\frac{1}{3*4}+\dots+\frac{1}{(n-1)n} = \\ = \frac{2-1}{1*2}+\frac{3-2}{2*3}+\dots+\frac{n-(n-1)}{(n-1)n} = \\ = \frac{1}{1}-\frac{1}{2}+\frac{1}{2}-\frac{1}{3}+\frac{1}{3}-\dots-\frac{1}{n}=1-\frac{1}{n} \]

Hint 2

How can we use the relationship from Hint 1 to our advantage?

Solution

We start by manipulating the sum:

\[ \frac{1}{1*3}+\frac{1}{3*5}+\dots+\frac{1}{(n-2)n} \gt \frac{1}{\sqrt{n}}-\frac{1}{n} \]

This simplifies to:

\[ \frac{1}{2}\Bigl[\frac{3-1}{1*3}+\frac{5-3}{3*5}+\dots+\frac{n-(n-2)}{(n-2)n}\Bigl] \gt \frac{1}{\sqrt{n}}-\frac{1}{n} \Leftrightarrow \\ \frac{1}{2}\Bigl(1-\frac{1}{3}+\frac{1}{3}-\frac{1}{5}+\frac{1}{5}-\dots-\frac{1}{n}\Bigl) \gt \frac{1}{\sqrt{n}}-\frac{1}{n} \Leftrightarrow \\ \frac{1}{2}-\frac{1}{2n}+\frac{1}{n} \gt \frac{1}{\sqrt{n}} \]

We then obtain:

\[ \frac{1}{2}+\frac{1}{2n} \gt \frac{1}{\sqrt{n}} \Leftrightarrow \\ \frac{1+\frac{1}{n}}{2} \gt \sqrt{1*\frac{1}{n}} \]

The equality holds only when \(n = 1\), but this is not an acceptable value for \(n\).

What if you apply the AM-GM inequality twice?

Let \(a,b\) positive real numbers, prove:

\[ a^4+b^4 \geq 2\sqrt{2}ab^2-1 \]

Solution

To solve this problem we need to apply the AM-GM inequality twice, in the following manner:

\[ (a^4+1)+b^4 \overbrace{\geq}^{\text{AM-GM}} 2a^2+b^4 \overbrace{\geq}^{\text{AM-GM}} 2b^2a\sqrt{2} \]

The AM-GM inequality reveals a profound connection between the sum (∑) and the product (∏) of positive real numbers. With this insight in mind, let’s explore and solve the following problems:

Let \(x_1, x_2, \dots x_n\) be non-negative and positive real numbers. Can you find a value for \(P=\prod_{i=1}^nx_i\) so that \(S=\sum_{i=1}^n x_i \ge \pi\) ?

Solution

We wish to find a value for \(P\) such that the inequality \(S\ge\pi\) holds.

Consider the following choice for \(P\):

\[ P=\prod_{i=1}^n x_i = (\frac{\pi}{n})^n \]

Now, applying the Arithmetic Mean-Geometric Mean (AM-GM) inequality, we have:

\[ \sum_{i=1}^n x_i \ge n \sqrt[n]{(\frac{\pi}{n})^n} = \pi \]

Q.E.D.

Let \(x,y,a,b \gt 0\), prove that:

\[ \frac{a}{x}+\frac{b}{y} \ge \frac{4(ay+bx)}{(x+y)^2} \]

Solution

We begin by simplifying the left-hand side:

\[ \frac{a}{x}+\frac{b}{y}=\frac{ay+bx}{xy} \]

Thus, the inequality becomes:

\[ \frac{ay+bx}{xy} \ge \frac{4(ay+bx)}{(x+y)^2} \Leftrightarrow \frac{1}{xy} \ge \frac{4}{(x+y)^2} \Leftrightarrow \Bigl(\frac{x+y}{2}\Bigl)^2 \ge xy \]

This is a well-known result from the Arithmetic Mean-Geometric Mean (AM-GM) inequality, which states that:

\[ \Bigl(\frac{x+y}{2}\Bigl)^2 \ge xy \]

Thus, the original inequality holds, with equality if and only if \(x=y\).

Source

The Romanian Math Olympiad

Now, for a bit of fun, let’s tackle a problem that may appear more challenging at first glance. You just need to apply the AM-GM twice.

Let \(a,b,c\) positive real numbers. Prove that:

\[ \frac{1}{x^2+yz}+\frac{1}{y^2+zx}+\frac{1}{z^2+xy} \leq \frac{1}{2}\left(\frac{1}{xy}+\frac{1}{yz}+\frac{1}{zx}\right) \]

Hint 1

Can you apply the AM-GM inequality to the denominator?

Hint 2

Using the AM-GM inequality, observe that:

\[ \frac{1}{\underbrace{x^2+yz}_{\ge 2x\sqrt{xy}}} \le \frac{1}{2x\sqrt{xy}} = \frac{\sqrt{xy}}{2xyz} \]

Apply this result to each term in the sum.

Solution

Applying the AM-GM inequality to each denominator, we obtain:

\[ x^2 + yz \geq 2x\sqrt{yz}, \quad y^2 + zx \geq 2y\sqrt{zx}, \quad z^2 + xy \geq 2z\sqrt{xy}. \]

Taking reciprocals and using the fact that if \(a \geq b > 0\), then \(\frac{1}{a} \leq \frac{1}{b}\), we get:

\[ \frac{1}{x^2+yz} \leq \frac{1}{2x\sqrt{yz}}, \quad \frac{1}{y^2+zx} \leq \frac{1}{2y\sqrt{zx}}, \quad \frac{1}{z^2+xy} \leq \frac{1}{2z\sqrt{xy}}. \]

Summing these inequalities, we obtain:

\[ \frac{1}{x^2+yz} + \frac{1}{y^2+zx} + \frac{1}{z^2+xy} \leq \frac{1}{2} \left( \frac{\sqrt{yz}}{xyz} + \frac{\sqrt{zx}}{xyz} + \frac{\sqrt{xy}}{xyz} \right). \]

Applying the AM-GM inequality again, we observe that:

\[ \sqrt{yz} + \sqrt{zx} + \sqrt{xy} \leq \frac{(x+y) + (y+z) + (z+x)}{2} = x+y+z. \]

Thus, we obtain:

\[ \frac{1}{x^2+yz}+\frac{1}{y^2+zx}+\frac{1}{z^2+xy} \leq \frac{1}{2xyz} (x + y + z). \]

Rewriting the right-hand side in terms of fractions, we get:

\[ \frac{1}{2xyz} (x+y+z) = \frac{1}{2} \left( \frac{1}{xy}+\frac{1}{yz}+\frac{1}{zx} \right). \]

Thus, the desired inequality holds. Equality occurs when \( x = y = z \).

Source

Romanian Math Olympiad, 2006

The following problem was shortlisted for the 1971 International Mathematical Olympiad. While not particularly difficult, it requires discovering a clever trick.

Let \(a_1, a_2, a_3, a_4\) be positive real numbers. Prove the the inequality:

\[ \frac{a_1+a_3}{a_1+a_2} + \frac{a_2+a_4}{a_2+a_3} + \frac{a_3+a_1}{a_3+a_4} + \frac{a_4+a_2}{a_4+a_1} \ge 4 \]

Hint 1

A direct consequence of the AM-GM inequality is:

\[ (a+b)^2 \ge 4ab \]

Hint 2

Applying the result from "Hint 1" gives:

\[ 4(a_1+a_2)(a_3+a_4) \leq (a_1+a_2+a_3+a_4)^2 \Leftrightarrow \\ \Leftrightarrow \frac{1}{(a_1+a_2)(a_3+a_4)} \geq \frac{4}{(a_1+a_2+a_3+a_4)^2} \]

Solution

To bring the fractions to a common denominator, rewrite the given sum as:

\[ E = \frac{a_1+a_3}{a_1+a_2} + \frac{a_2+a_4}{a_2+a_3} + \frac{a_3+a_1}{a_3+a_4} + \frac{a_4+a_2}{a_4+a_1} = \\ \frac{a_1+a_3}{a_1+a_2} + \frac{a_3+a_1}{a_3+a_4} + \frac{a_2+a_4}{a_2+a_3} + \frac{a_4+a_2}{a_4+a_1} = \\ \frac{(a_1+a_3)(a_1+a_2+a_3+a_4)}{(a_1+a_2)(a_3+a_4)} + \frac{(a_2+a_4)(a_1+a_2+a_3+a_4)}{(a_4+a_1)(a_2+a_3)} \]

Using the AM-GM inequality (see Hint 1 and Hint 2):

\[ \begin{cases} \frac{1}{(a_1+a_2)(a_3+a_4)} \ge \frac{4}{(a_1+a_2+a_3+a_4)^2} \\ \frac{1}{(a_1+a_4)(a_2+a_3)} \ge \frac{4}{(a_1+a_2+a_3+a_4)^2} \end{cases} \]

Substituting these bounds into the expression for \(E\):

\[ E \ge \frac{4(a_1+a_3)(a_1+a_2+a_3+a_4)}{(a_1+a_2+a_3+a_4)^2} + \frac{4(a_2+a_4)(a_1+a_2+a_3+a_4)}{(a_1+a_2+a_3+a_4)^2} = \\ = \frac{4(a_1+a_3)}{a_1+a_2+a_3+a_4} + \frac{4(a_2+a_4)}{a_1+a_2+a_3+a_4} = \frac{4(a_1+a_2+a_3+a_4)}{a_1+a_2+a_3+a_4} = 4 \]

Thus, the inequality is proven.

When does the equality hold?

Source

IMO 1971, Short List

For the next exercise the key idea is to leverage the additional conditions provided and incorporate them into your proof of the main inequality.

Let \(a,b,c\) positive real numbers such that \(abc=1\). Prove that:

\[ \frac{c+ab+1}{1+a+a^2}+\frac{a+bc+1}{1+b+b^2}+\frac{b+ca+1}{1+c+c^2} \ge 3 \]

Hint 1

Since \(abc=1\), we can rewrite the terms as:

\[ \frac{c+ab+1}{1+a+a^2}=\frac{abc^2+ab+abc}{1+a+a^2} \]

Solution

Given \(abc=1\) we can express the sum as:

\[ \frac{c+ab+1}{1+a+a^2}+\frac{a+bc+1}{1+b+b^2}+\frac{b+ca+1}{1+c+c^2} = \] \[ = \frac{abc^2+ab+abc}{1+a+a^2}+\frac{a^2bc+bc+abc}{1+b+b^2}+\frac{ab^2c+ca+abc}{1+c+c^2} = \] \[ = ab(\frac{1+c+c^2}{1+a+a^2})+bc(\frac{1+a+a^2}{1+b+b^2})+ca(\frac{1+b+b^2}{1+c+c^2}) \]

By the Arithmetic Mean-Geometric Mean (AM-GM) inequality, we have:

\[ ab(\frac{1+c+c^2}{1+a+a^2})+bc(\frac{1+a+a^2}{1+b+b^2})+ca(\frac{1+b+b^2}{1+c+c^2}) \overbrace{\ge}^{AM-GM} 3\sqrt{a^2b^2c^2} = 3 \]

Equality holds when \(a=b=c=1\).

Sometimes you can solve “inequations” using “inequalities”:

Find \(x,y,z \in [\frac{1}{3}, \infty)\) such that \(x+y+z \leq 4\) and \(\sqrt{3x-1}+\sqrt{3y-1}+\sqrt{3z-1} \geq 3\sqrt{3}\).

Hint 1

Notice that the second inequality can be rewritten as:

\[ \sqrt{x-\frac{1}{3}}+\sqrt{x-\frac{1}{3}}+\sqrt{x-\frac{1}{3}} \geq 3 \]

Solution

The key intuition for problems like this is to "trap" the value of the given sum between constants to force equality. Specifically, we aim to find a real number \(k\) such that:

\[ k \leq \sqrt{3x-1}+\sqrt{3y-1}+\sqrt{3z-1} \leq k \]

Once we achieve this, we can determine the values of \(x, y, z\) by analyzing the equality conditions.

Then we can "force" the equality conditions and find the numbers.

First, notice that the second inequality transforms as follows:

\[ \sqrt{3x-1}+\sqrt{3y-1}+\sqrt{3z-1} \geq 3\sqrt{3} \Leftrightarrow \] \[ \Leftrightarrow \sqrt{x-\frac{1}{3}} + \sqrt{y-\frac{1}{3}} + \sqrt{z-\frac{1}{3}} \geq 3 \tag{1} \]

Now, we can apply the AM-GM inequality (Arithmetic Mean-Geometric Mean Inequality) in a "forced" way. Remember that for each positive number \(a\), \(\sqrt{a} \leq \frac{a+1}{2}\) Applying this to each term separately, we get:

\[ \sqrt{x-\frac{1}{3}} \leq \frac{x-\frac{1}{3}+1}{2} = \frac{3x+2}{6} \tag{2} \] \[ \sqrt{y-\frac{1}{3}} \leq \frac{y-\frac{1}{3}+1}{2} = \frac{3y+2}{6} \tag{3} \] \[ \sqrt{z-\frac{1}{3}} \leq \frac{z-\frac{1}{3}+1}{2} = \frac{3z+2}{6} \tag{4} \]

Adding inequalities \((2)\), \((3)\), (\(4\)) and using the condition \(x+y+z \leq 4\), we further obtain:

\[ \sqrt{x-\frac{1}{3}} + \sqrt{y-\frac{1}{3}} + \sqrt{z-\frac{1}{3}} \leq \frac{3(\overbrace{x+y+z}^{\leq 4})+6}{6} \leq 3 \tag{5} \]

From \((1)\) and \((5)\), it follows that:

\[ 3 \leq \sqrt{x-\frac{1}{3}} + \sqrt{y-\frac{1}{3}} + \sqrt{z-\frac{1}{3}} \leq 3 \]

Thus, equality must occur throughout. In particular, equality must hold when applying AM-GM, meaning that:

\[ x-\frac{1}{3} = 1 \Rightarrow x=\frac{4}{3} \] \[ y-\frac{1}{3} = 1 \Rightarrow y=\frac{4}{3} \] \[ z-\frac{1}{3} = 1 \Rightarrow z=\frac{4}{3} \]

Therefore, the only solution is \(x = y = z = \frac{4}{3}\).

Source

Gheorghe F. Molea

Cyclic and Symmetrical Inequalities

Before proceeding further, let’s familiarize ourselves with two important notions: cyclic inequalities and symmetrical inequalities.

A cyclic inequality involves a set of variables arranged in a cyclic order, where each term follows a repeating pattern by “rotating” the variables. For instance, for three variables we perform the transformation:

\[ a \rightarrow b, \quad b \rightarrow c, \quad c \rightarrow a \]

The cyclic behavior can be expressed using the notation:

\[ \sum_{\text{cyc}} f(a,b,c) = f(a,b,c) + f(b,c,a) + f(c,a,b) \]

Here are some examples that illustrate cyclic sums and their corresponding inequalities:

\[ \sum_{\text{cyc}} a^2 = a^2+b^2+c^2 \overbrace{\geq}^{AM-GM} 3\sqrt[3]{(abc)^2} \] \[ \sum_{\text{cyc}} \frac{a}{b} = \frac{a}{b} + \frac{b}{c} + \frac{c}{a} \overbrace{\geq}^{AM-GM} 3 \] \[ \sum_{\text{cyc}} a^3b^2c = a^3b^2c + b^3c^2a + c^3a^2b \overbrace{\geq}^{AM-GM} 3(abc)^2 \] \[ \sum_{\text{cyc}} \frac{c+ab+1}{1+a+a^2} = \frac{c+ab+1}{1+a+a^2}+\frac{a+bc+1}{1+b+b^2}+\frac{b+ca+1}{1+c+c^2} \ge 3 \]

In contrast, a symmetrical inequality is one that remains unchanged under any permutation of its variables. A function \(f(a,b,c)\) is said to be symmetric if it satisfies:

\[ \underbrace{f(a,b,c)=f(a,c,b)=f(b,a,c)=f(b,c,a)=f(c,a,b)=f(c,b,a)}_{3! \quad \text{permutations}} \]

In other words, any swap or rearrangement of \(a, b, c\) leaves the function invariant. This complete symmetry is denoted by the notation: \(\sum_{\text{sym}}\), which indicates summing over all distinct permutations of the variables.

Consider the following examples:

\[ \sum_{\text{sym}} a = a + a + b + b + c + c \overbrace{\geq}^{AM-GM} 6\cdot\sqrt[3]{abc} \] \[ \sum_{\text{sym}} a^2b = a^2b + a^2c + b^2c + b^2a + c^2a + c^2b \overbrace{\geq}^{AM-GM} 6 \cdot abc \]

To highlight the difference, compare the following two sums:

Another comparison:

These examples illustrate how the cyclic and symmetrical sum notations capture different patterns of symmetry within inequalities. While cyclic sums rotate the variables in a fixed order, symmetrical sums account for every possible permutation, reflecting complete invariance under any swap of the variables.

Grouping terms

Solving more complex inequality problems requires more than just applying the general formula. A common approach involves strategically grouping terms to our advantage, then applying the Arithmetic Mean-Geometric Mean (AM-GM) inequality—or another relevant inequality—to each group. Finally, we combine the resulting inequalities to form a larger, more powerful inequality.

With practice, this technique will become second nature. However, at first glance, it may seem unintuitive.

Can you solve the following problems without relying on any hints?

Let \( a,b,c \in \mathbb{R}_{+} \). Prove the inequality:

\[ (a^2+bc)(b^2+ca)(c^2+ab) \ge 8(abc)^2 \]

Hint 1

Apply the Arithmetic Mean-Geometric Mean (AM-GM) inequality to each pair of terms as follows:

\(a^2+bc\ge2\sqrt{a^2bc}\)

Solution

This is a classic problem where we group terms and apply the AM-GM inequality to each group individually. First, we apply the AM-GM inequality to each pair of terms:

\[ \begin{cases} a^2+bc\ge2\sqrt{a^2bc} = 2a\sqrt{bc} \\ b^2+ac\ge2\sqrt{b^2ac} = 2b\sqrt{ac} \\ c^2+ab\ge2\sqrt{c^2ab} = 2c\sqrt{ab} \end{cases} \]

Next, multiplying these inequalities together (since all terms are positive), we get:

\[ \underbrace{(a^2+bc)}_{\ge 2a\sqrt{bc}}\underbrace{(b^2+ac)}_{\ge 2b\sqrt{ac}}\underbrace{(c^2+ab)}_{\ge 2c\sqrt{ab}}\ge8abc\sqrt{a^2b^2c^2}=8(abc)^2 \]

Thus, the inequality is proven.

Equality holds when \(a=b=c=1\).

Let \(x,y,z\) positive real numbers, and \((1+x)(1+y)(1+z)=8\), prove that \(xyz \le 1\).

Hint 1

The terms in the product are already grouped for us.

Solution

We apply the Arithmetic Mean-Geometric Mean (AM-GM) inequality to each term in the product:

\[ (1+x) \ge 2\sqrt{x}, (1+y) \ge 2\sqrt{y} \text{ and } (1+z) \ge 2\sqrt{z} \]

Thus, we have:

\[ 8 = \underbrace{(1+x)}_{\ge 2\sqrt{x}}\underbrace{(1+y)}_{\ge 2\sqrt{y}}\underbrace{(1+z)}_{\ge2\sqrt{z}} \ge 8\sqrt{xyz} \]

Since we are given \((1+x)(1+y)(1+z)=8\), it follows that:

\[ 8 \ge 8 \sqrt{xyz} \Leftrightarrow 1 \ge xyz \]

Hence, the inequality is proven. Equality holds true when \(x=y=z=1\).

Let \(x_i \in \mathbb{R}_{+}\), where \(n\) is an even natural number and \(\prod_{i=1}^nx_i=1\). Prove that:

\((x_1^2+x_2^2)(x_3^2+x_4^2)\dots(x_{n-1}^2+x_{n}^2)\ge 2^{\frac{n}{2}}\)

Hint 1

The terms are already grouped for us. Consider applying the Arithmetic Mean-Geometric Mean (AM-GM) inequality to each group.

Solution

We apply the AM-GM inequality to each pair of terms:

\[ \begin{cases} x_1^2+x_2^2 \ge 2x_1x_2 \\ x_3^2+x_4^2 \ge 2x_3x_4 \\ \dots \\ x_{n-1}^2+x^{n} \ge 2x_{n-1}x_n \end{cases} \]

In total there are \(\frac{n}{2}\) groups. Thus, we have:

\[ (x_1^2+x_2^2)\dots(x_{n-1}^2+x_{n}^2)\ge(\underbrace{2*\dots*2}_{\frac{n}{2}})\sqrt{\prod_{i=1}^n x_i} \]

Since \(\prod_{i=1}^{n}x_i=1\), this simplifies to:

\[ (x_1^2+x_2^2)\dots(x_{n-1}^2+x_{n}^2)\ge 2^{\frac{n}{2}} \]

Equality holds when \(x_1=x_2=\dots=x_n=1\).

Let \(x,y,z\) positive real numbers such that \(xyz=6\). Prove the inequality:

\[ \frac{2x}{(2x^2+y^2)(x^2+2z^2)}+\frac{3y}{(3y^2+z^2)(y^2+3x^2)}+\frac{5z}{(5z^2+x^2)(z^2+5y^2)} \leq \frac{1}{8} \]

Hint 1

The denominators are grouped in a way that suggests applying the Arithmetic Mean-Geometric Mean (AM-GM) inequality directly.

Solution

We apply the AM-GM inequality to bound each factor in the denominators:

\[ \frac{2x}{(2x^2+y^2)(x^2+2z^2)} \leq \frac{2x}{2\sqrt{2}xy \cdot 2\sqrt{2}xz} = \frac{1}{4\cdot xyx} = \frac{1}{24} \] \[ \frac{3y}{(3y^2+z^2)(y^2+3x^2)} \leq \frac{3y}{2\sqrt{3}zy \cdot 2\sqrt{3}yx} = \frac{1}{4\cdot xyz} = \frac{1}{24} \] \[ \frac{5z}{(5z^2+x^2)(z^2+5y^2)} \leq \frac{5z}{2\sqrt{5}zx \cdot 2\sqrt{5}zy} = \frac{1}{4\cdot xyz} = \frac{1}{24} \]

Summing the three inequalities proves the original.

Source

Romanian Math Olympiad, Etapa Locala, 9th grade, Galati, 2018

Let \(n\) a natural number greater than \(0\), prove that:

\[ \sqrt{1\cdot2}+\sqrt{2\cdot3}+\dots+\sqrt{n(n+1)} < n(n+1) \]

Solution

We first apply the AM-GM inequality term by term:

\[ \underbrace{\sqrt{1\cdot2}}_{< \frac{1+2}{2}}+\underbrace{\sqrt{2\cdot3}}_{< \frac{2+3}{2}}+\dots+\underbrace{\sqrt{n(n+1)}}_{< \frac{n+n+1}{2}} < \] \[ < 1+2+\dots+n+\frac{n}{2} = \frac{n(n+2)}{2} \overbrace{<}^{?} n(n+1) \]

It remains to show \(\,\frac{n\,(n+2)}{2} < n(n+1)\). Multiply both sides by 2:

\[ n\,(n+2) \;<\; 2n\,(n+1). \]

Dividing by \(n\) (which is positive) simplifies to \(\,n+2 < 2(n+1)\), or \(\,n + 2 < 2n + 2\), which is true for all \(n>0\). Thus \(\,\frac{n\,(n+2)}{2} < n(n+1)\), completing the proof.

Source

Romanian Math Olympiad, Etapa Locala, 8th grade, Caras-Severin, 2013 (Laurentiu Panaitopol)

Let \(n \in \mathbb{N}^{*}\) such that \(n\gt1\), prove the inequality:

\[ n^3+n^2+2n \gt 4\sqrt{n}(1+\sqrt{2}+\dots+\sqrt{n}) \]

Solution

First, factor out terms to reveal a pattern:

\[ n^3+n^2+2n \gt 4\sqrt{n}(1+\sqrt{2}+\dots+\sqrt{n}) \Leftrightarrow \] \[ \Leftrightarrow n\cdot\frac{n(n+1)}{1}+2n \gt 4\left(\sqrt{n}+\sqrt{2n}+\dots+\sqrt{n^2}\right) \]

Dividing both sides by \(2\), the inequality becomes:

\[ n\cdot(1+\dots+n)+(\underbrace{1+\dots+1}_{=n}) \gt 2(\sqrt{n}+\sqrt{2n}+\dots+\sqrt{n\cdot n}) \] \[ (n + 1) + (2n + 1) + \dots + (n\cdot n + 1) \gt 2(\sqrt{n}+\sqrt{2n}+\dots+\sqrt{n\cdot n}) \]

We claim that each group \((k n + 1)\) is at least \(2\sqrt{k n}\) by the AM-GM inequality::

\[ \begin{cases} n+1 \overbrace{\geq}^{\text{AM-GM}} 2\sqrt{n} \\ 2n+1 \overbrace{\geq}^{\text{AM-GM}} 2\sqrt{2n} \\ \dots \\ n\cdot n + 1 \overbrace{\geq}^{\text{AM-GM}} 2\sqrt{n\cdot n} \end{cases} \]

Summing these inequalities for \(k\) from 1 to \(n\) yields exactly the required inequality. When summing the inequalities, the equality condition is lost due to the fact that there's no such \(n\) so that all weak inequalities hold (in the same time).

Source

Romanian Math Olympiad, Etapa Locala, 9th grade, Galati, 2005

The next problem is more difficult to solve but a previous exercise might help:

Let \(n \in \mathbb{N}\setminus\{0,1\}\). Prove the inequality:

\[ \frac{1}{5+2^4}+\frac{1}{5+3^4}+\frac{1}{5+4^4}+\dots+\frac{1}{5+n^4} \lt \frac{n-1}{4\cdot n} \]

Hint 1

In a previous exercise, we proved that: \(a^4+b^4+1 \geq 2b^2a\sqrt{2}\) by applying the AM-GM inequality twice:

\[ a^4+b^4+1 = (a^4+1) + b^4 \geq 2a^2+b^4 \geq 2\sqrt{2}b^2a \]

Hint 2

Observe that each term can be written in the form:

\[ \frac{1}{5+k^4} = \frac{1}{\left(\sqrt{4}\right)^4+k^4 + 1} \leq \text{?} \]

Hint 3

Approximate the resulting sum with a telescoping series.

Solution

From a previous result, we have: \(a^4+b^4+1 \geq 2b^2a\sqrt{2}\) by applying the AM-GM inequality twice:

\[ a^4+b^4+1 = (a^4+1) + b^4 \geq 2a^2+b^4 \geq 2\sqrt{2}b^2a \]

We can apply this giving:

\[ \frac{1}{5+2^4}+\frac{1}{5+3^4}+\frac{1}{5+4^4}+\dots+\frac{1}{5+n^4} = \] \[ = \frac{1}{\left(\sqrt{4}\right)^4+2^4+1}+\frac{1}{\left(\sqrt{4}\right)^4+3^4+1}+\dots+\frac{1}{\left(\sqrt{4}\right)^4+n^4+1} \leq \] \[ \leq \frac{1}{2\cdot\sqrt{2}\cdot 2^2 \cdot \sqrt{2}} + \leq \frac{1}{2\cdot\sqrt{2}\cdot 3^2 \cdot \sqrt{2}} + \dots + \frac{1}{2\cdot\sqrt{2}\cdot n^2 \cdot \sqrt{2}} \leq \] \[ \frac{1}{4}(\frac{1}{2^2}+\frac{1}{3^2}+\dots+\frac{1}{n^2}) \]

So, what we know so far is that:

\[ \begin{align} \sum_{i=2}^n \frac{1}{5+i^4} \leq \frac{1}{4}\left(\sum_{i=2}^n \frac{1}{i^4} \right) \end{align} \]

However, a cleaner bound can be obtained by noting: \( \frac{1}{i^2} < \frac{1}{(i-1)\cdot i}\). Using this in \((1)\) leads to:

\[ \begin{align} \sum_{i=2}^n \frac{1}{5+i^4} \leq \frac{1}{4}\left(\sum_{i=2}^n \frac{1}{i^4} \right) \lt \frac{1}{4} \left(\sum_{i=2}^n \frac{1}{(i-1)\cdot i}\right) \tag{2} \end{align} \]

We now evaluate the telescoping sum:

\[ \frac{1}{4} \left(\sum_{i=2}^n \frac{1}{(i-1)\cdot i}\right) = \frac{1}{4} \left(\frac{1}{1\cdot 2}+\frac{1}{2 \cdot 3} + \dots + \frac{1}{(n-1) \cdot n}\right) = \] \[ \begin{align} = \frac{1}{4}\left(1-\frac{1}{2}+\frac{1}{2}-\frac{1}{3}+\dots+\frac{1}{n-1}-\frac{1}{n}\right) = \frac{1}{4}\left(1-\frac{1}{n}\right) = \frac{n-1}{4\cdot n} \tag{3} \end{align} \]

Therefore, introducing \((3)\) in \((2)\) proves our inequality:

\[ \sum_{i=2}^n \frac{1}{5+i^4} \leq \frac{1}{4}\left(\sum_{i=2}^n \frac{1}{i^4} \right) \lt \frac{1}{4} \left(\sum_{i=2}^n \frac{1}{(i-1)\cdot i}\right) = \frac{n-1}{4\cdot n} \]

Source

Romanian Math Olympiad, Etapa Locala, 8th grade, Galati, 2019

Remember, the key to solving the next problem is to leverage the additional condition to your advantage. While the terms may already be “grouped” for you, this alone won’t be sufficient.

Let \(a,b,c\) positive real numbers such that \(a+b+c=1\). Prove the inequality:

\[ \Bigl(\frac{1}{a}-1\Bigl)\Bigl(\frac{1}{b}-1\Bigl)\Bigl(\frac{1}{c}-1\Bigl) \ge 8 \]

Hint 1

Rewrite each term in the following form:

\[\frac{1}{a}-1=\frac{1-a}{a} \]

Hint 2

Using \(a+b+c=1\), we can express \(a = 1-b-c\), and consequently

\[ \frac{1}{a}-1=\frac{1-a}{a}=\frac{b+c}{a} \]

Solution

Taking the above hints into account, we proceed as follows:

\[ \Bigl(\frac{1}{a}-1\Bigl)\Bigl(\frac{1}{b}-1\Bigl)\Bigl(\frac{1}{c}-1\Bigl) = \frac{\overbrace{b+c}^{\ge2\sqrt{bc}}}{a} * \frac{\overbrace{a+c}^{\ge2\sqrt{ac}}}{b} * \frac{\overbrace{a+b}^{\ge2\sqrt{ab}}}{c} \]

By applying the AM-GM inequality to each term:

\[ b+c \ge 2\sqrt{bc} , a+c \ge 2\sqrt{ac} \text{ and } a+b \ge 2\sqrt{ab} \]

We obtain:

\[ \frac{\overbrace{b+c}^{\ge2\sqrt{bc}}}{a} * \frac{\overbrace{a+c}^{\ge2\sqrt{ac}}}{b} * \frac{\overbrace{a+b}^{\ge2\sqrt{ab}}}{c} \ge \frac{2\sqrt{bc}}{a} * \frac{2\sqrt{ac}}{b} * \frac{2\sqrt{ab}}{c} = \frac{8\sqrt{a^2b^2c^2}}{abc}=8 \]

Equality holds when \(a+b+c=1\) and \(a=b=c\). To satisfy both conditions \(a=b=c=\frac{1}{3}\).

The next problem, proposed by Dorin Marghidanu, is a generalisation of the previous one, but can you “spot” the similarity?

If \(0 \lt a_1, a_2, \dots, a_n \leq 1\), such that \(a_1+a_2+\dots+a_n=n-1\), then:

\[ (n-1)^n \cdot (1-a_1) \cdot (1-a_2) \cdot \dots \cdot (1-a_n) \leq a_1 a_2 \dots a_n \]

Hint 1

This substitution simplifies the inequality into a more recognizable form.

Try letting \(a_i = 1 - b_i\).

In this regard, why don't you write \(a_i=1-b_i\)?

Solution

We define \(a_i = 1 - b_i\). This is valid because \(a_i \in (0, 1]\) implies \(b_i \in [0, 1)\), and since \(a_i > 0\), we know \(b_i < 1\):

\[ n-1=\sum_{i=1}^n a_i = \sum_{i=1}^n \left(1-b_i\right) = n - \sum_{i=1}^n b_i \Rightarrow \] \[ \sum_{i=1}^n b_i = 1 \]

Substituting back into the inequality, the left-hand side becomes:

\[ \left(n-1\right)^n * \prod_{i=1}^n b_i \leq \prod_{i=1}^n \left(1-b_i\right) \Leftrightarrow \]

Now divide both sides by \(\prod b_i\) (which is positive), to get:

\[ \prod_{i=1}^n \left(\frac{1-b_i}{b_i}\right) \geq (n-1)^n \]

This is the key inequality. It can be proven using the AM-GM inequality. Here's the outline:

Each term \(\frac{1 - b_i}{b_i}\) can be written as \(\frac{\sum_{j \ne i} b_j}{b_i}\). Since \(\sum b_i = 1\), we know \(\sum_{j \ne i} b_j = 1 - b_i\):

Using AM-GM on each numerator:

\[ \frac{b_2+b_3+\dots+b_n}{b_1} + \frac{b_1+b_3+\dots+b_n}{b_2} + \dots + \frac{b_1+\dots+b_{n-1}}{b_n} \geq \] \[ \frac{(n-1)\sqrt[(n-1)]{b_2*\dots*b_n}}{b_1} + \dots + \frac{(n-1)\sqrt[(n-1)]{b_1*\dots*b_{n-1}}}{b_n} \]

Using the AM-GM inequality again:

\[ \frac{(n-1)\sqrt[n-1]{b_2*\dots*b_n}}{b_1} + \dots + \frac{(n-1)\sqrt[n]{b_1*\dots*b_{n-1}}}{b_n} \geq \] \[ \frac{(n-1)^n\sqrt[n-1]{\prod_{i=1}^n b_i}}{\prod_{i=1}^n b_i} = (n-1)^n \]

Thus, the inequality is proven.

Source

Dorin Marghidanu

The next problem is another generalisation of an exercise proposed to the Romanian (Olympiad) Team Selection Test from 2002:

Let \(k, x_1, x_2, \dots, x_n \in (0,1)\) such that \(k \gt \max\{x_1, x_2, \dots, x_n\}\). Prove the following inequality:

\[ \sqrt{\prod_{i=1}^n x_i} + \sqrt{\prod_{i=1}^n(k-x_i)} \lt k \]

Hint 1

If \(a\in (0,1)\) then \(\sqrt{a}\lt\sqrt[3]{a}\).

Solution

Since \(x_1, x_2, \dots, x_n \in (0,1)\) and \(k \in (0,1)\) then \(\prod x_i \in (0,1)\) and \(\prod (k-x_i) \in (0,1)\).

Taking this into consideration:

\[ \begin{align} \sqrt{\prod_{i=1}^n x_i} \leq \sqrt[n]{\prod_{i=1}^n x_i} \overbrace{\leq}^{AM-GM} \frac{1}{n} \cdot \sum_{i=1}^n x_i \tag{1} \end{align} \]

In the same time, by applying the AM-GM inequality:

\[ \begin{align} \sqrt{\prod_{i=1}^n(k-x_i)} \leq \sqrt[n]{\prod_{i=1}^n(k-x_i)} \overbrace{\leq}^{AM-GM} \frac{1}{n}\cdot \sum_{i=1}^n (k-x_i) \tag{2} \end{align} \]

After summing \((1)\) and \((2)\), our initial inequality is proven:

\[ \sqrt{\prod_{i=1}^n x_i} + \sqrt{\prod_{i=1}^n(k-x_i)} \leq \frac{1}{n}\cdot \sum_{i=1}^n x_i + \frac{1}{n}\cdot \sum_{i=1}^n (k-x_i) \Leftrightarrow \] \[ \sqrt{\prod_{i=1}^n x_i} + \sqrt{\prod_{i=1}^n(k-x_i)} \leq \frac{1}{n}\cdot \sum_{i=1}^n x_i + k - \frac{1}{n}\cdot \sum_{i=1}^n x_i = k \]

Source

Romanian Team Selection Test, 2002, generalisation

Let \(a,b,c\) be positive real numbers such that \(a^3+b^3+c^3=3\). Prove the inequality:

\[ \frac{a(1-a)}{(1+b)(1+c)} + \frac{b(1-b)}{(1+a)(1+c)} + \frac{c(1-c)}{(1+a)(1+b)} \le 0 \]

Hint 1

Consider rewriting the expression with a common denominator.

Solution

First, we combine the terms over a common denominator:

\[ \frac{a(1-a)}{(1+b)(1+c)} + \frac{b(1-b)}{(1+a)(1+c)} + \frac{c(1-c)}{(1+a)(1+b)} = \\ = \frac{(a+b+c)-(a^3+b^3+c^3)}{(1+a)(1+b)(1+c)} \le 0 \]

Since \((1+a)(1+b)(1+c)\gt 0\), it suffices to show that:

\[ a^3+b^3+c^3 \ge a + b + c \]

We apply the AM-GM inequality to each of the terms::

\[ \begin{cases} a^3 + 1 + 1 \ge 3a \\ b^3 + 1 + 1 \ge 3b \\ c^3 + 1 + 1 \ge 3c \end{cases} \]

Summing these inequalities gives:

\[ (a^3+b^3+c^3)+6 \ge 3(a+b+c) \]

Since \(a^3+b^3+c^3=3\), we have:

\[ 3 + 6 \ge 3 (a+b+c) \Leftrightarrow 3 \ge a+b+c \]

Thus, we conclude:

\[ a^3+b^3+c^3 \ge 3 \ge a+b+c \]

Equality holds when \(a=b=c=1\).

Source

Gheorghe Craciun - Facebook group "Comunitatea Profesorilor De Matematica"

We have already solved the following inequality using a different technique, but can you now prove it again by applying ‘grouping’ and the AM-GM inequality?

Let \(x,y,z\) positive real numbers. Prove that:

\[ x^2+y^2+z^2 \ge xy + yz + zx \]

Hint 1

Multiply both sides of the inequality by \(2\), then group the terms and apply the AM-GM inequality to each group.

Solution

By multiplying both sides of the inequality by \(2\), we obtain the equivalent inequality::

\( \underbrace{(x^2+y^2)}_{\ge 2xy}+\underbrace{(y^2+z^2)}_{\ge 2yz}+\underbrace{(z^2+x^2)}_{\ge 2zx} \ge 2(xy + yz + zx) \Leftrightarrow \\ x^2 + y^2 + z^2 \ge xy + yz + zx \)

Now, applying the AM-GM inequality to each pair of terms:

\[ \begin{cases} x^2 + y^2 \ge 2xy \\ y^2 + z^2 \ge 2yz \\ z^2 + x^2 \ge 2zx \end{cases} \]

Summing the results:

\[ 2(x^2 + y^2 + z^2) \ge 2(xy + yz+zx) \Leftrightarrow x^2 + y^2 + z^2 \ge xy + yz + zx \]

Equality holds when \(x=y=z\).

Let \(x,y,z\) positive real numbers. Prove that

\[ x^2+y^2+z^2 \ge x\sqrt{yz}+y\sqrt{zx}+z\sqrt{xy} \]

Hint 1

We have already proven that: \(x^2+y^2+z^2\ge xy+yz+zx\)

Hint 2

We can apply the AM-GM inequality to the following pairs of terms::

\[xy+yz \ge 2y\sqrt{xz}\]

Solution

First, we observe that we have already proven:

\[ x^2+y^2+z^2 \ge xy + yz + zx \]

Thus, it is sufficient to prove that:

\[ xy + yz + zx \ge x\sqrt{yz}+y\sqrt{zx}+z\sqrt{xy} \]

Applying the AM-GM inequality to the following pairs::

\[ \begin{cases} xy+yz \ge 2y\sqrt{xz} \\ yz+zx \ge 2z\sqrt{xy} \\ zx+xy \ge 2x\sqrt{zy} \end{cases} \]

Summing these inequalities:

\[ 2(xy+yz+zx) \ge 2(x\sqrt{yz}+y\sqrt{zx}+z\sqrt{xy}) \Leftrightarrow \\ xy+yz+zx \ge x\sqrt{yz}+y\sqrt{zx}+z\sqrt{xy} \]

Therefore::

\[ \boldsymbol{x^2+y^2+z^2} \ge xy + yz + zx \ge \boldsymbol{x\sqrt{yz}+y\sqrt{zx}+z\sqrt{xy}} \]

Equality holds for \(x=y=z=1\).

Let \(a,b,c\) positive real numbers, prove:

\[ a^3+b^3+c^3 + 3 \ge a+b+c+ab+bc+ca \]

Hint 1

Consider applying the AM-GM inequality to the following form:

\[x^3+y^3+1 \ge 3xy\]

Hint 2

Alternatively, apply the AM-GM inequality to this form:

\[x^3+1+1 \ge 3x\]

Solution

We begin by applying the AM-GM inequality to the following groups of terms:

\[ \begin{cases} a^3+b^3+1 \ge 3ab \\ b^3+c^3+1 \ge 3bc \\ c^3+a^3+1 \ge 3ca \\ \end{cases} \] and \[ \begin{cases} a^3+1+1 \ge 3a \\ b^3+1+1 \ge 3b \\ c^3+1+1 \ge 3c \end{cases} \]

Now, summing all the inequalities:

\[ 3(a^3+b^3+c^3+3) \ge 3(a+b+c+ab+bc+ca) \Rightarrow \\ \Rightarrow a^3+b^3+c^3 + 3 \ge a + b + c + ab + bc + ca \]

The equality holds if \(a=b=c=1\).

Source

Romanian Math Olympiad, Etapa Locala, 10th grade, Dolj, 2013

Can we make a short generalisation?

Let \(a,b,c,d\) positive real numbers and \(n \in \mathbb{N}^{*}\), prove that:

\[ \frac{a^n+b^n+c^n+d^n}{n} \geq a+b+c+d+2 - 2n \]

Hint 1

Use the AM-GM inequality in a creative way by adding several \(1\)s. For instance, to relate \(a^4\) back to \(a\), you can write:

\[ a^4+1+1+1 \overbrace{\geq}^{\text{AM-GM}} 4\cdot a \]

Generalize this idea to handle \(a^n\) by adding exactly \(\frac{n(n-1)}{2}\), so that the total number of terms matches n when applying AM-GM.

Solution

Apply the following "trick" to each of the variables \(a\), \(b\), \(c\), and \(d\):

\[ a^n + \overbrace{\underbrace{(1+\dots+1)}_{=\frac{(n-1)n}{2}}}^{(n-1)\quad \text{terms}} \overbrace{\geq}^{\text{AM-GM}} n \cdot a \]

Here, by design, you have \(1\) copy of \(a^n\) and \(\frac{n(n-1)}{2}\) copies of \(1\). You then group them in a way that AM–GM has n factors total, producing the factor \(n\,a\) on the right.

Summing these inequalities for \(a, b, c,\) and \(d\) gives:

\[ a^n + b^n + c^n + d^2 + 4 * \frac{(n-1) \cdot n}{2} \geq n \cdot (a+b+c+d) \]

Divide both sides by \(n\):

\[ \frac{\sum_{\text{cyc}} a^n}{n} + 2n-2 \geq \sum_{\text{cyc}} a \Leftrightarrow \] \[ \frac{\sum_{\text{cyc}} a^n}{n} \geq \sum_{\text{cyc}}a + 2-2n \]

This is exactly the desired inequality.

By AM-GM, equality holds only if all the terms we used are equal. In the step \[ a^n + 1 + 1 + \dots + 1 = n\,a, \] it forces \(a^n = 1\) and also each “1” must match \(a^n\). Consequently, \(a = 1\). The same argument applies to \(b, c,\) and \(d\), so all must be 1. Thus the inequality is sharp exactly when \(a=b=c=d=1\).

In a somewhat similar fashion:

Let \(a,b,c \in (0,1]\), and n natural number \(\geq 2\) prove that:

\[ \frac{c}{a^n + b^n + 3n-2} + \frac{a}{b^n+c^n+3n-2} + \frac{b}{c^n+a^n+3n-2} \leq \frac{1}{n} \]

Hint 1

We observe that:

\[ a^n + \underbrace{1+1+\dots+1}_{n-1 \quad \text{terms}} \overbrace{\geq}^{\text{AM-GM}} n \cdot a \]

Solution

For each term:

\[ \frac{c}{a^n + b^n + 3n-2} = \frac{c}{a^n+\underbrace{(1+\dots+1)}_{n-1}+b^n+\underbrace{(1+\dots+1)}_{n-1}+n} \leq \] \[ \frac{c}{an+bn+n} \tag{1} \]

Since \(c \in (0,1)\) then \(n \geq n \cdot c\). Introducing this in \((1)\):

\[ \frac{c}{a^n + b^n + 3n-2} \leq \frac{c}{an+bn+n} \leq \frac{c}{na + nb + nc} = \frac{c}{n(a+b+c)} \]

With this in mind, we can write the inequality as:

\[ \frac{c}{a^n + b^n + 3n-2} + \frac{a}{b^n+c^n+3n-2} + \frac{b}{c^n+a^n+3n-2} \leq \] \[ \frac{c}{n(a+b+c)}+\frac{b}{n(a+b+c)}+\frac{a}{n(a+b+c)} \leq \frac{a+b+c}{n(a+b+c)} = \frac{1}{n} \]

Source

Andrei N. Ciobanu

Let \(a,b,c \in \mathbb{R}_{+}\). Prove that:

\[ a^3+b^3+c^3 \ge \frac{3}{2}(ab+bc+cd-1) \]

Hint 1

Consider applying the AM-GM inequality to the following groups \(\{a^3, b^3, \text{?}\}\), \(\{?, b^3, c^3\}\) and \(\{a^3, ?, c^3\}\)

Solution

We apply the AM-GM inequality to the following terms:

\[ \begin{cases} a^3+b^3+1 \ge 3\sqrt[3]{a^3b^3} = 3ab \\ b^3+c^3+1 \ge 3\sqrt[3]{b^3c^3} = 3bc \\ c^3+a^3+1 \ge 3\sqrt[3]{c^3a^3} = 3ca \end{cases} \]

Next, we sum the inequalities::

\[ 2(a^3+b^3+c^3) + 6 \ge 3(ab+bc+ca) \Leftrightarrow \\ a^3+b^3+c^3 \ge \frac{3}{2}(ab+bc+ca-1) \]

The equality holds if \(a=b=c=1\).

Source

Concursul Gazeta Matematica, 9th grade, 12th edition, Romania

Let \(a,b,c \in \mathbb{R}_+\), prove:

\[ a^3+b^3+c^3 \ge \frac{1}{3} (a+b+c)(ab+bc+ca) \]

Hint 1

Recall that we have already proven the following:

\[a^3+b^3 \ge ab(a+b)\]

Hint 2

By applying the AM-GM inequality, we can also conclude:

\[a^3+b^3+c^3\ge 3abc\]

Solution

We start by applying the inequalities derived earlier:

\[ \begin{cases} a^3 + b^3 \ge ab(a+b) \\ b^3 + c^3 \ge bc(b+c) \\ c^3 + a^3 \ge ca(c+a) \\ \end{cases} \]

Additionally, we have the inequality:

\[ a^3+b^3+c^3 \ge 3abc \]

Next, we sum all of these inequalities:

\[ 3(a^3+b^3+c^3) \ge ab(a+b) + abc + bc(b+c) + abc + ca(c+a) + abc \\ a^3+b^3+c^3 \ge \frac{1}{3}(a+b+c)(ab+bc+ca) \]

Equality holds when \(a=b=c=1\).

The next two problems can be easily solved using an inequality that we will discuss shortly. However, let’s first attempt to solve them using the AM-GM inequality, employing a strategy similar to the one we used earlier:

Let \(x,y,z \in (0, \infty)\). Prove:

\[ \frac{x^3}{y}+\frac{y^3}{z}+\frac{z^3}{x} \ge x^2 + y^2 + z^2 \]

Hint 1

By applying the AM-GM inequality, we know::

\[ \frac{x^3}{y}+xy \ge 2x^2 \]

Solution

We start by applying the AM-GM inequality to each pair of terms:

\[ \begin{cases} \frac{x^3}{y}+xy \ge 2x^2 \\ \frac{y^3}{z}+yz \ge 2y^2 \\ \frac{z^3}{x}+zx \ge 2z^2 \end{cases} \]

Next, summing the inequalities:

\[ \frac{x^3}{y}+\frac{y^3}{z}+\frac{z^3}{x} + (xy+yz+zx) \ge (x^2+y^2+z^2) + (x^2+y^2+z^2) \]

Since \(x^2 + y^2 + z^2 \ge xy + yz + zx\), we obtain:

\[ \frac{x^3}{y}+\frac{y^3}{z}+\frac{z^3}{x} + (xy+yz+zx) \ge (x^2+y^2+z^2) + (x^2+y^2+z^2) \ge \\ \ge (x^2+y^2+z^2) + (xy + yz + zx) \Rightarrow \\ \Rightarrow \frac{x^3}{y}+\frac{y^3}{z}+\frac{z^3}{x} \ge x^2+y^2+z^2 \]

The equality holds if \(x=y=z\).

Source

Concursul Gazeta Matematica si Viitori Olimpici, 9th grade, Edition X, Romania

Let \(x,y,z\) positive real numbers, prove:

\[ \frac{x^2+\sqrt{yz}}{2\sqrt{yz}}+\frac{y^2+\sqrt{zx}}{2\sqrt{zx}}+\frac{z^2+\sqrt{xy}}{2\sqrt{xy}} \ge \sqrt{x}+\sqrt{y}+\sqrt{z} \]

Hint 1

An equivalent way to write the inequality is:

\[ \frac{x^2}{\sqrt{yz}}+\frac{y^2}{\sqrt{zx}}+\frac{z^2}{\sqrt{xy}}+3 \ge 2(\sqrt{x}+\sqrt{y}+\sqrt{z}) \]

Solution

We begin by rewriting the inequality as:

\[ \frac{x^2}{\sqrt{yz}}+\frac{y^2}{\sqrt{zx}}+\frac{z^2}{\sqrt{xy}}+3 \ge 2(\sqrt{x}+\sqrt{y}+\sqrt{z}) \]

Next, we apply the AM-GM inequality to the following terms:

\[ \begin{cases} \frac{x^2}{\sqrt{yz}}+\sqrt{y}+\sqrt{z}+1 \ge 4\sqrt{x} \\ \frac{y^2}{\sqrt{zx}}+\sqrt{z}+\sqrt{x}+1 \ge 4\sqrt{y} \\ \frac{z^2}{\sqrt{xy}}+\sqrt{x}+\sqrt{y}+1 \ge 4\sqrt{z} \end{cases} \]

Summing all the inequalities leads to the desired result.

The equality holds when \(x=y=z=1\).

An important thing to take in consideration is that when we sum/multiply weak inequalities involving interdependent terms, we need to verify conditions across the inequalities to check if they remain consistent:

Let \( a,b,c \in> \mathbb{R}_{+} \) such that \(ab+bc+ca=1\). Prove that:

\[ a+b+c\gt\frac{2}{3}(\sqrt{1-ab}+\sqrt{1-bc}+\sqrt{1-ac}) \]

Hint 1

Apply the Arithmetic Mean-Geometric Mean (AM-GM) inequality in the following manner (for all terms):

\(\frac{a+(b+c)}{2}\ge\sqrt{a(b+c)}\)

Hint 2

Consider the fact that \(ab+bc+ca=1\). How might this help us?

Solution

We begin by grouping the terms and applying the AM-GM inequality three times:

\[ \begin{cases} \frac{a+(b+c)}{2} \ge \sqrt{a(b+c)} = \sqrt{ab+ac} \\ \frac{b+(a+c)}{2} \ge \sqrt{b(a+c)} = \sqrt{ba+bc} \\ \frac{c+(a+b)}{2} \ge \sqrt{c(a+b)} = \sqrt{ca+cb} \end{cases} \]

Next, we sum all the inequalities:

\[ \frac{3(a+b+c)}{2}\gt\sqrt{ab+bc}+\sqrt{ba+bc}+\sqrt{ca+bc} \]

Using the condition \(ab+bc+ca=1\), we can substitute the terms:

\[ \frac{3(a+b+c)}{2}\gt\sqrt{1-bc}+\sqrt{1-ac}+\sqrt{1-ab} \Leftrightarrow \\ a+b+c\gt\frac{2}{3}(\sqrt{1-bc}+\sqrt{1-ac}+\sqrt{1-ab}) \]

Furthermore, since \(a,b,c\) are positive real numbers, equality cannot hold.

Source

Andrei N. Ciobanu

Sometimes, we need to find creative ways to group terms. If you’re unable to find the solution right away, don’t worry—this inequality is quite challenging to solve using only the AM-GM inequality.

Let \(a,b,c \in (0,\infty)\) such that \(bc+ac+ca=abc\). Prove that:

\[ 3\sqrt{abc} \gt 2\sqrt{2}(\sqrt{a}+\sqrt{b}+\sqrt{c}) \]

Hint 1

Can you show that::

\[ \frac{1}{a}+\frac{1}{b}+\frac{1}{c}=1 \]

Hint 2

Consider applying the AM-GM inequality to the following groupings:

\[ \begin{cases} \frac{1}{a}+\frac{1}{a}+\frac{1}{b} = \frac{2}{a}+\frac{1}{b} \ge 2\sqrt{\frac{2}{ab}} \\ \frac{1}{b}+\frac{1}{b}+\frac{1}{c} = \frac{2}{b}+\frac{1}{c} \ge 2\sqrt{\frac{2}{bc}} \\ \frac{1}{c}+\frac{1}{c}+\frac{1}{a} = \frac{2}{c}+\frac{1}{a} \ge 2\sqrt{\frac{2}{ca}} \end{cases} \]

Solution

Start by dividing both sides of the equation \(abc=bc+ac+ab\) by \(abc\), which yields:

\[ \frac{1}{a}+\frac{1}{b}+\frac{1}{c}=1 \]

Next, apply the AM-GM inequality to the following groupings of terms::

\[ \frac{1}{a}+\frac{1}{a}+\frac{1}{b} = \frac{2}{a}+\frac{1}{b} \ge 2\sqrt{\frac{2}{ab}} \\ \frac{1}{b}+\frac{1}{b}+\frac{1}{c} = \frac{2}{b}+\frac{1}{c} \ge 2\sqrt{\frac{2}{bc}} \\ \frac{1}{c}+\frac{1}{c}+\frac{1}{a} = \frac{2}{c}+\frac{1}{a} \ge 2\sqrt{\frac{2}{ca}} \]

Summing these inequalities results in:

\[ 3(\frac{1}{a}+\frac{1}{b}+\frac{1}{c}) \gt 2\sqrt{2}(\frac{1}{\sqrt{ab}} + \frac{1}{\sqrt{bc}}+\frac{1}{\sqrt{ca}}) \Leftrightarrow \\ 3 \gt 2\sqrt{2}(\frac{1}{\sqrt{ab}} + \frac{1}{\sqrt{bc}}+\frac{1}{\sqrt{ca}}) \Leftrightarrow \\ 3\sqrt{abc} \gt 2\sqrt{2}(\sqrt{c}+\sqrt{a}+\sqrt{b}) \]

Thus, the inequality holds.

Source

Andrei N. Ciobanu

Problems can become even more elegant when we apply strategic grouping to well-known identities. In this context, try solving the following exercise without relying on any hints:

Let \(a,b\in(0,\infty)\) and \(a-b\gt0\). Prove that:

\[a^3+b^3\gt 4ab\sqrt{b(a-b)}\]

Hint 1

Find an identity involving \(a^3+b^3\) and apply the AM-GM inequality.

Solution

We begin with the identity for the sum of cubes:

\[ a^3+b^3=(a+b)(a^2-ab+b^2) \]

Now, apply the AM-GM inequality to each factor:

\[ a^3+b^3=(\underbrace{a+b}_{\gt 2\sqrt{ab}})[\underbrace{(a^2-ab)+b^2}_{\gt 2b\sqrt{a(a-b)}}] \]

Since equality holds in AM-GM only if \(a=b\), and we know \(a-b\gt0\) the equality condition cannot be satisfied.

Multiplying the inequalities:

\[ a^3+b^3=(a+b)(a^2-ab+b^2) \gt 2\sqrt{ab} 2b \sqrt{a(a-b)} \Leftrightarrow \\ a^3+b^3 \gt 4ab\sqrt{b(a-b)} \]

Source

Andrei N. Ciobanu

At the end of this section, let’s refocus on some elegant weak inequalities:

Let \(x_1, x_2, \dots, x_n\) be positive real numbers. Prove that:

\[ 1+\sum_{j=2}^n[(\sum_{i=1}^j x_i) * (\sum_{i=1}^j \frac{1}{x_i})] \ge \frac{n(n+1)(2n+1)}{6} \]

Hint 1

Recall the formula for the sum of squares of the first \(n\) positive integers:

\[ 1^2+2^2+\dots+n^2 = \frac{n(n+1)(2n+1)}{6} \]

Hint 2

Expand the given expression as follows:

\[ 1+(x_1+x_2)(\frac{1}{x_1}+\frac{1}{x_2})+\dots+(x_1+\dots+x_n)(\frac{1}{x_1}+\dots+\frac{1}{x_n}) \ge \\ \ge \frac{n(n+1)(2n+1)}{6} \]

Solution

We begin by applying the Arithmetic Mean-Geometric Mean (AM-GM) inequality to the following expressions:

\[ \begin{cases} x_1+x_2+\dots+x_n \ge n\sqrt[n]{x_1 * x_2 * \dots * x_n} \\ \frac{1}{x_1}+\frac{1}{x_2}+\dots+\frac{1}{x_n} \ge n\sqrt[n]{\frac{1}{x_1 * x_2 * \dots * x_n}} \end{cases} \]

Equality holds in both cases when \(x_1=x_2=\dots=x_n\). Thus, multiplying the two inequalities gives:

\[ (x_1+x_2+\dots+x_n)(\frac{1}{x_1}+\frac{1}{x_2}+\dots+\frac{1}{x_n}) \ge n^2 \sqrt[n]{\frac{x_1*x_2*\dots*x_n}{x_1*x^2*\dots*x_n}} = n^2 \]

Now, consider the terms in the original sum:

\[ 1+\underbrace{(x_1+x_2)(\frac{1}{x_1}+\frac{1}{x_2})}_{\ge 2^2}+\dots+\underbrace{(x_1+\dots+x_n)(\frac{1}{x_1}+\dots+\frac{1}{x_n})}_{\ge n^2} \ge \\ \ge 1 + \sum_{i=2}^n i^2 \ge \frac{n(n+1)(2n+1)}{6} \]

Therefore, the inequality is proven, and equality holds when \(x_1=x_2=\dots=x_n\).

Source

Andrei N. Ciobanu

Let \(n\in\mathbb{N}^{*}\) and \(x_1,\dots,x_n \in (0, \infty)\), satisfying the conditions:

\(S_1=\sum_{i=1}^n x_i = 9\) and \(S_2=\sum_{i=1}^n\frac{1}{x_i}=1\)

Find \(x_1,\dots,x_n\).

Hint 1

Can you use some techniques from the previous problem?

Solution

We apply the Arithmetic Mean-Geometric Mean (AM-GM) inequality to \(S_1\) and \(S_2\) and then multiply the two inequalities:

\[ S_1 * S_2 \ge n\sqrt[n]{x_1*\dots*x_n}*n\sqrt[n]{\frac{1}{x_1}*\dots*\frac{1}{x_n}} = n^2 \]

Thus we have:

\[ 9 \ge n^2 \Rightarrow n\in\{1,2,3\} \]

For \(n=1\) it is impossible because there is no \(x_1\) such that \(x_1=9\) and \(\frac{1}{x_1}=1\).

For \(n=2\) we need to solve the following system of equations:

\[ \begin{cases} x_1+x_2=9 \\ \frac{1}{x_1}+\frac{1}{x_2}=1 \end{cases} \]

Solving this system, we find two solutions:

\[ (x_1, x_2)\in\{(\frac{9+3\sqrt{5}}{2}, \frac{9-\sqrt{3}}{5}),(\frac{9-3\sqrt{5}}{2}, \frac{9+\sqrt{3}}{5})\} \]

For \(n=3\) the inequality becomes an equality, so \(x_1=x_2=x_3=3\).

Source

Concursul Gazeta Matematica, 6th Edition, 9th grade, Romania